Neo4j Announces Strategic Collaboration with AWS to Tackle Generative AI Hallucinations

In Brief

Neo4j and AWS’s strategic collaboration includes integration with Amazon Bedrock for reducing hallucinations in enterprise generative AI.

Graph database and analytics platform Neo4j recently entered a multi-year strategic collaboration agreement (SCA) with Amazon Web Services (AWS) that aims to improve generative artificial intelligence (AI) results. The collaboration will focus on integrating with the enterprise solution Amazon Bedrock to enhance accuracy, transparency and explainability while mitigating AI hallucinations.

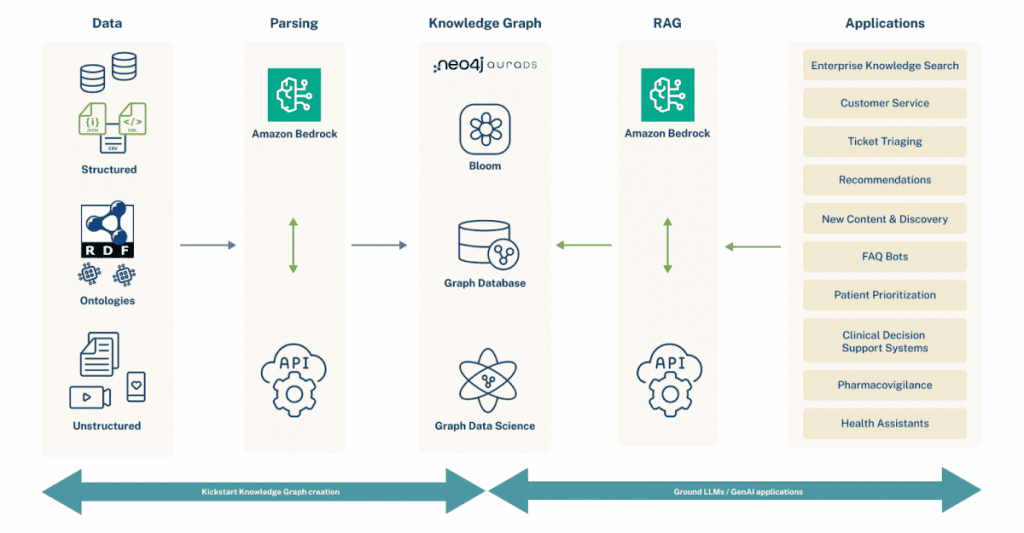

The collaborative effort will address a common challenge faced by developers working with large language models (LLMs): the models need long-term memory grounded in specific enterprise data and domains. Neo4j’s combination of knowledge graphs and native vector search aims to solve this problem.

“Neo4j’s native vector search allows generative AI applications using LLMs to conduct semantic search conveniently against vectors stored as properties in the same database as the knowledge graph. The results can then be combined with facts from surrounding nodes in the knowledge graph, providing the full context for answers that are more complete, accurate, relevant and explainable,” Sudhir Hasbe, Chief Product Officer at Neo4j, told Metaverse Post.
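The pattern Hasbe describes, ranking nodes by vector similarity and then pulling in facts from neighboring nodes, can be illustrated with a small Python sketch. This is a toy in-memory stand-in, not the Neo4j API: the node names, embedding values, relationship labels and the helper functions `semantic_search` and `surrounding_facts` are all assumptions made for illustration.

```python
import math

# Toy in-memory stand-in for a knowledge graph; this is NOT the Neo4j API.
# Each node stores its embedding as an ordinary property next to its text.
# The embedding values are made up for illustration.
nodes = {
    "aura": {"text": "Neo4j Aura Professional", "embedding": [0.9, 0.1, 0.0]},
    "marketplace": {"text": "AWS Marketplace", "embedding": [0.1, 0.9, 0.0]},
    "neo4j": {"text": "Neo4j", "embedding": [0.0, 0.2, 0.9]},
}
edges = [
    ("aura", "AVAILABLE_ON", "marketplace"),
    ("neo4j", "OFFERS", "aura"),
]


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def semantic_search(query_vec, k=1):
    """Return the ids of the k nodes whose embeddings best match the query."""
    ranked = sorted(
        nodes,
        key=lambda node_id: cosine(query_vec, nodes[node_id]["embedding"]),
        reverse=True,
    )
    return ranked[:k]


def surrounding_facts(node_id):
    """Collect every relationship that touches the given node as a text fact."""
    return [
        f"{nodes[src]['text']} {rel} {nodes[dst]['text']}"
        for src, rel, dst in edges
        if node_id in (src, dst)
    ]


hits = semantic_search([1.0, 0.0, 0.0], k=1)
context = surrounding_facts(hits[0])
```

Here the vector match finds the starting node and the graph relationships supply the context around it. In a real deployment both steps would presumably run inside the database rather than in application code.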

In conjunction with this announcement, Neo4j has made its fully managed graph database offering, Neo4j Aura Professional, available on the AWS Marketplace. The move will streamline the experience for developers working on generative AI by offering a rapid-start platform.

AWS Marketplace is a digital catalog of software listings that facilitates the discovery, testing, purchase and deployment of software compatible with AWS.

“The general availability of Neo4j Aura Professional on the AWS Marketplace allows developers to create their first knowledge graph using their existing AWS accounts and consume existing AWS commits. This simplifies signup, billing, and spend monitoring,” said Neo4j’s Hasbe.

Leveraging Native Vector Search For Effective LLM Outcomes

Neo4j asserts that its graph database platform’s capabilities extend beyond those of traditional counterparts. Using native vector search, it captures both explicit and implicit relationships, enabling the creation of knowledge graphs. These graphs, in turn, empower AI systems to reason, infer and retrieve pertinent information effectively.

Neo4j functions as an enterprise database, grounding LLMs and serving as a long-term memory for generative AI systems.

The integration with Amazon Bedrock introduces several advantages. Employing retrieval-augmented generation (RAG), Neo4j, in collaboration with LangChain and Amazon Bedrock, creates virtual assistants grounded in enterprise knowledge. This aims to reduce hallucinations, ensuring more accurate, transparent and explainable results.

“Retrieval-augmented generation (RAG) is a generative AI development best practice for overcoming the limitations of LLMs by grounding the models on external sources of knowledge. In this approach, the LLM serves as a natural language interface to access external information, thereby not relying only on its internal knowledge to produce answers,” Neo4j’s Hasbe told Metaverse Post. “Now AWS developers can easily implement RAG using the integration of Neo4j with Amazon Bedrock, which provides a single API for the collection of the world’s most popular LLMs.”
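As a rough illustration of the RAG flow described in the quote, the Python sketch below retrieves facts for a question, places them in the prompt, and hands the prompt to a model. It makes several assumptions: the `FACTS` store is a hypothetical stand-in for a knowledge graph query, retrieval is a naive keyword match, and `echo_llm` is a placeholder that returns the prompt instead of calling a real model. It does not use the Neo4j, LangChain or Amazon Bedrock APIs.

```python
# Hypothetical fact store standing in for a knowledge graph lookup.
FACTS = {
    "aura": ["Neo4j Aura Professional is available on the AWS Marketplace."],
    "bedrock": ["Neo4j integrates with Amazon Bedrock."],
}


def retrieve(question):
    """Naive keyword retrieval: return facts whose keyword appears in the question."""
    lowered = question.lower()
    found = []
    for keyword, facts in FACTS.items():
        if keyword in lowered:
            found.extend(facts)
    return found


def build_prompt(question, facts):
    """Ground the model by putting the retrieved facts ahead of the question."""
    context = "\n".join(f"- {fact}" for fact in facts) or "- (no facts found)"
    return (
        "Answer using only the facts below. "
        "If they are insufficient, say so.\n"
        f"Facts:\n{context}\n"
        f"Question: {question}"
    )


def answer(question, llm):
    """Retrieve, build the grounded prompt, then call the supplied model."""
    return llm(build_prompt(question, retrieve(question)))


def echo_llm(prompt):
    """Placeholder model (assumption): returns the prompt it was given."""
    return prompt


result = answer("Where can I get Aura?", echo_llm)
```

Passing the model in as a function keeps the retrieval and prompt-building steps independent of any particular LLM provider, which is the role the article attributes to a single API in front of several models.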

Additionally, Neo4j’s knowledge graphs, when integrated with Amazon Bedrock, can invoke a diverse ecosystem of foundation models. This enables the generation of highly personalized text and summarization for end-users.

Developers can use Amazon Bedrock to generate vector embeddings from unstructured data and enrich knowledge graphs with them through Neo4j’s new vector search and store capability. They can also use Amazon Bedrock’s generative AI capabilities to process unstructured data.
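The enrichment step can be sketched in Python as follows: turn a piece of unstructured text into a vector and store it as a property on a graph node. The embedding function here is a deliberately crude hashed bag-of-words stand-in (an assumption, not a Bedrock model), and the node is a plain dictionary rather than a Neo4j node. The names `toy_embed` and `enrich` are hypothetical.

```python
import hashlib


def toy_embed(text, dims=8):
    """Stand-in embedding (assumption): hash each token into one of `dims` buckets.

    A real system would call an embedding model here instead.
    """
    vec = [0.0] * dims
    for token in text.lower().split():
        digest = hashlib.sha256(token.encode("utf-8")).digest()
        vec[digest[0] % dims] += 1.0
    return vec


def enrich(node, text, embed=toy_embed):
    """Return a copy of the node with the text and its embedding as properties."""
    enriched = dict(node)
    enriched["text"] = text
    enriched["embedding"] = embed(text)
    return enriched


doc_node = enrich(
    {"id": "doc-1", "label": "Document"},
    "graph databases store connected data",
)
```

Because `embed` is a parameter, the stand-in could be swapped for a call to a hosted embedding model without changing how the vector is attached to the node.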

“Combining the generative AI power of Amazon Bedrock with Neo4j knowledge graphs can bring institutional knowledge to every team member. This combination also unlocks value from unstructured data, providing complete answers grounded in facts, not hallucinations,” explained Neo4j’s Hasbe. “The key is that Bedrock’s capabilities are augmented by Neo4j’s factual knowledge graphs. This produces accurate, contextual responses instead of LLM guesses.”

This strategic collaboration signifies a pivotal moment in the convergence of graph technology and cloud computing excellence, propelling enterprises into a new era of AI innovation and the optimized utilization of connected data.

“Neo4j serves as long-term memory for large language models built on AWS. Through our Aura cloud offering on AWS Marketplace and the robust functionalities of our graph database, we are streamlining the journey for developers, empowering them to effortlessly get started and be productive on the practical applications of real-world generative AI in the cloud,” Neo4j’s Hasbe told Metaverse Post. “Our collaboration seeks to help customers unlock novel opportunities and reweave the fabric of possibility in the realm of enterprise innovation.”

Disclaimer

In line with the Trust Project guidelines, please note that the information provided on this page is not intended to be and should not be interpreted as legal, tax, investment, financial, or any other form of advice. It is important to only invest what you can afford to lose and to seek independent financial advice if you have any doubts. For further information, we suggest referring to the terms and conditions as well as the help and support pages provided by the issuer or advertiser. MetaversePost is committed to accurate, unbiased reporting, but market conditions are subject to change without notice.

About The Author

Victor is a Managing Tech Editor/Writer at Metaverse Post and covers artificial intelligence, crypto, data science, metaverse and cybersecurity within the enterprise realm. He boasts half a decade of media and AI experience working at well-known media outlets such as VentureBeat, DatatechVibe and Analytics India Magazine. A Media Mentor at prestigious universities including Oxford and USC, with a Master's degree in data science and analytics, Victor is deeply committed to staying abreast of emerging trends. He offers readers the latest and most insightful narratives from the Tech and Web3 landscape.
