Top 15 GPT-4 and GPT-3 Chatbots: Talk with AI, ask questions

GPT-3 has a wide range of applications because of its powerful text-generation capabilities. It is used to produce creative writing that imitates the styles of Shakespeare, Edgar Allan Poe, and other well-known authors, and it can also write blog posts, advertising copy, and even poetry.

Because programming code is just another kind of text, GPT-3 can write functional, executable code when given only a few snippets of example code as a prompt. GPT-3 has also been used to create website mockups: one developer combined GPT-3 with the UI prototyping tool Figma so that a webpage can be generated from a short text description. Website clones have even been created with GPT-3 by supplying a URL as the prompt. Developers also use GPT-3 to generate code snippets, regular expressions, plots and charts from text descriptions, Excel functions, and output for other development tools.
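The "few snippets of example code" approach is usually called few-shot prompting: the prompt itself carries a handful of worked examples, and the model is expected to continue the pattern for a new task. The sketch below only assembles such a prompt; it does not call any model. The helper name `build_few_shot_prompt` and the `# Task:` comment format are hypothetical choices for illustration, not an OpenAI convention.

```python
# Hypothetical helper: builds a few-shot prompt string.
# No API call is made here; the result is the text you would send
# to a text-completion model, which should continue after the last task.

def build_few_shot_prompt(examples, query, instruction=""):
    """Join (description, code) example pairs plus a new description into one prompt."""
    parts = []
    if instruction:
        parts.append(instruction.strip())
    for description, code in examples:
        parts.append(f"# Task: {description}\n{code.strip()}")
    # The final task has no code yet; the model is expected to write it.
    parts.append(f"# Task: {query}\n")
    return "\n\n".join(parts)


examples = [
    ("Return the square of x", "def square(x):\n    return x * x"),
    ("Return True if n is even", "def is_even(n):\n    return n % 2 == 0"),
]

prompt = build_few_shot_prompt(
    examples,
    "Return the larger of a and b",
    instruction="Write a Python function for each task.",
)
print(prompt)
```

The more consistent the examples are in format, the more likely a model is to follow the same format in its continuation.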

In the gaming industry, GPT-3 is also used to generate realistic chat dialogue, quizzes, images, and other graphics from text prompts. It can also produce comic strips, recipes, and memes.

In this post, we share the top 15 GPT-3 playgrounds, where you can talk with AI chatbots and ask them questions. GPT-3 was designed as a more capable and more generalizable successor to GPT-2, and its eventual goal is to be able to answer any question a person might ask. To date, GPT-3 has been used to build digital assistants, question-answering chatbots, and even a chatbot that reads the news and answers questions about it.

If you’re interested in talking with AI chatbots and asking them questions, then these playgrounds are definitely for you!

AI Buddy

AI Buddy lets you chat over WhatsApp with an AI powered by GPT-3, which it uses to carry on a reasonably coherent conversation (users have to bring their own OpenAI API keys). It currently remembers the last five conversations. Several improvements are planned:

- Build a Telegram version, since running the bot on WhatsApp is relatively expensive for both the creator and the users.

- Allow users to select distinct AI personas.

- Allow users to specify how long they want the bot to remember their conversations.

- Allow users to submit their own data so that GPT-3 can be fine-tuned for a specific bot, for instance a bot trained on a particular writer's work or even on your own writing.
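AI Buddy's description says only that it remembers the last five conversations, not how. Because GPT-3 itself keeps no memory between requests, one common approach, sketched here purely as an assumption, is to keep a fixed-size window of recent exchanges and replay it at the start of every new prompt. The class `ConversationMemory`, its `max_exchanges` parameter, and the `User:`/`AI:` prompt format are hypothetical; `max_exchanges` also shows how the planned "how long should the bot remember" setting could be exposed.

```python
from collections import deque


class ConversationMemory:
    """Hypothetical sketch: keep only the most recent N exchanges."""

    def __init__(self, max_exchanges=5):
        # A deque with maxlen silently drops the oldest item once it is full.
        self.exchanges = deque(maxlen=max_exchanges)

    def add(self, user_message, bot_reply):
        """Record one user message and the bot's reply."""
        self.exchanges.append((user_message, bot_reply))

    def as_prompt(self, new_message):
        """Prepend the remembered exchanges to the new user message."""
        lines = []
        for user, bot in self.exchanges:
            lines.append(f"User: {user}")
            lines.append(f"AI: {bot}")
        lines.append(f"User: {new_message}")
        lines.append("AI:")  # the model's reply would be generated after this
        return "\n".join(lines)


memory = ConversationMemory(max_exchanges=5)
for i in range(7):
    memory.add(f"question {i}", f"answer {i}")

prompt = memory.as_prompt("question 7")
print(prompt)  # only questions 2-6 survive, followed by question 7
```

A sliding window keeps the prompt short and the cost per request bounded, at the price of forgetting anything older than the window.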

AskBrian

Brian is a secure, multifunctional digital assistant for consultants and business professionals. He can translate entire documents into 100 languages, create company profiles for millions of businesses, convert documents to other formats, and transcribe audio and video recordings to Word, and he is constantly learning new skills to help you save time and money. His first GPT-3-powered skill, “Ask Anything,” lets you ask him any question. Brian replies within three minutes of receiving an email and communicates in a human-like manner via MS Teams and email. All of his skills and integrations are available immediately. AskBrian also offers customized digital assistant solutions through Brian for Enterprise.

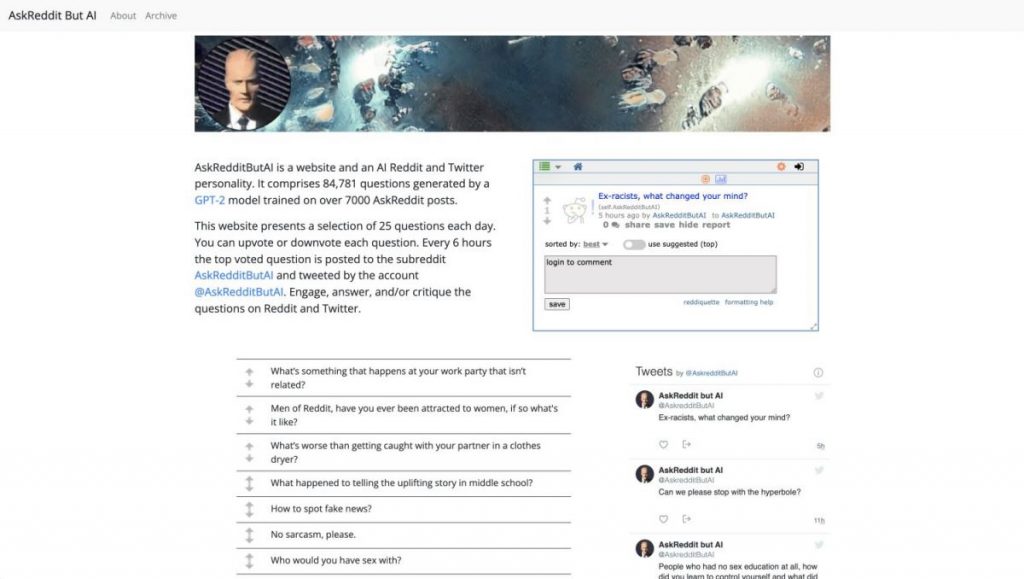

AskReddit But AI

AskRedditButAI is both a website and an AI-driven Reddit and Twitter persona. It draws on 84,781 questions generated by a GPT-2 model that was trained on more than 7,000 AskReddit posts. Each day, the website presents a new set of 25 questions, which visitors can upvote or downvote. Every six hours, the top-voted question is posted to the AskRedditButAI subreddit and tweeted by the @AskRedditButAI account. The project is an investigation into networked living and the posthuman.
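The site does not spell out how "top-voted" is computed. Assuming it means the highest net score (upvotes minus downvotes), selecting the question to post could look like the sketch below. The `Question` class, `pick_top_question` function, and sample questions are all made up for illustration, and the six-hour scheduling is not shown.

```python
from dataclasses import dataclass


@dataclass
class Question:
    text: str
    upvotes: int = 0
    downvotes: int = 0

    @property
    def score(self) -> int:
        # Net score: upvotes minus downvotes (an assumed ranking rule).
        return self.upvotes - self.downvotes


def pick_top_question(questions):
    """Return the question with the highest net score; max() keeps the first one on ties."""
    return max(questions, key=lambda q: q.score)


# Made-up sample data, not real AskRedditButAI questions.
todays_questions = [
    Question("What is the weirdest thing you have ever eaten?", upvotes=12, downvotes=3),
    Question("Which fictional city would you live in?", upvotes=20, downvotes=4),
    Question("What hobby did you quit and why?", upvotes=9, downvotes=1),
]

top = pick_top_question(todays_questions)
print(top.text)  # Which fictional city would you live in?
```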

GPT-3 Satoshi

GPT-3 Satoshi is an AI trained on Satoshi Nakamoto’s writings: 376 KB of plain text annotated with date and context metadata. The corpus includes the Bitcoin whitepaper, his web writings, his Bitcointalk posts, and his public and private emails.

GPT-4chan

GPT-4chan is a text generator trained on 4chan’s /pol/ board. Its creator fine-tuned the GPT-J language model on more than 134.5 million /pol/ posts spanning three and a half years, and incorporated the board’s thread structure into the program. The result was an AI that could post to /pol/ much as a human user would.

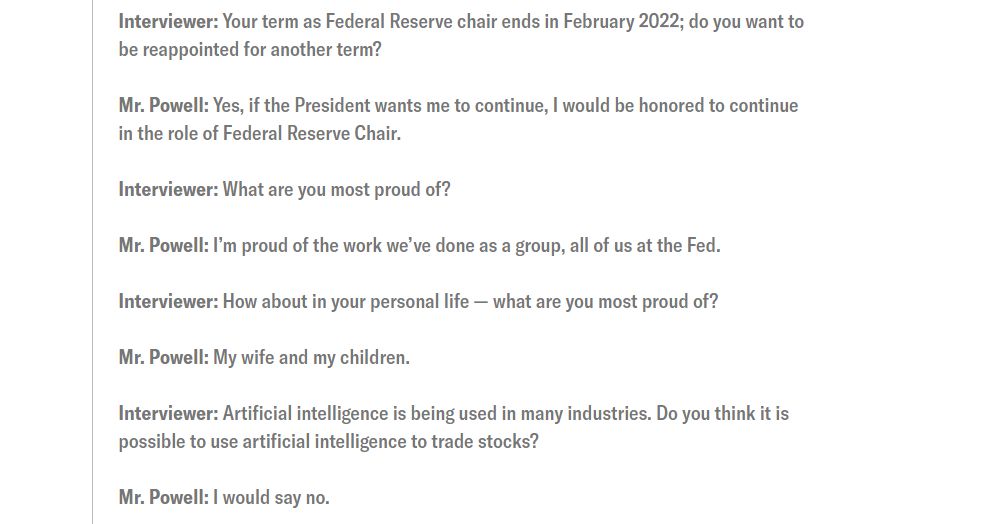

Jerome Powell Bot

Jason Rohrer is a multi-talented game designer, computer programmer, musician, writer, and AI researcher. He created Jerome Powell Bot, a conversational AI system, last summer. It is based on OpenAI’s GPT-3, a powerful autoregressive language model trained on thousands of digital books, the entirety of Wikipedia, and nearly a trillion words posted on the internet, which lets it produce remarkably human-like text.

Kibo

Kibo is built on OpenAI’s GPT-3 and can make suggestions and hold a conversation.

Project December

Project December’s GPT-3-based chatbots are extremely lifelike. GPT-3 learned to mimic human writing by ingesting enormous quantities of human-written text (Reddit threads were especially useful for this). It can produce anything from academic papers to messages from ex-lovers.

Emerson

Emerson, developed by Quickchat.ai using the GPT-3 language model, is a great conversationalist that can always teach you something new. Use it to practice foreign languages, look up information, or just have a lighthearted conversation. Emerson can understand uploaded photographs and speak your native language.

FAQ

GPT-3, the third-generation Generative Pre-trained Transformer, is a neural network machine learning model trained on internet data that can produce almost any kind of text. It was created by OpenAI and needs only a small amount of input text to produce large volumes of coherent, complex machine-generated text.

GPT-3 has been used to generate vast amounts of high-quality copy from only a small amount of input text, including articles, poems, stories, news reports, and conversations.

GPT-3 is a language prediction model: a machine learning neural network that takes text as input and predicts, piece by piece, the text most likely to come next. It learns to do this by recognizing patterns in a massive amount of text from the internet. GPT-3 is the third iteration of this family of models, which are pre-trained on a large body of text and used primarily for text generation.
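To make "language prediction" concrete, here is a deliberately tiny stand-in: a bigram counter that predicts the next word from the previous word alone. This is not how GPT-3 works internally (GPT-3 is a transformer with 175 billion parameters that predicts subword tokens from a long context); it only illustrates the same loop of reading text, predicting a likely continuation, and repeating. All function names and the sample corpus are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if the word was never seen."""
    counts = model.get(word.lower())  # .get() avoids creating empty entries
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def generate(model, start, length=5):
    """Greedily extend `start` by repeatedly appending the predicted next word."""
    words = [start.lower()]
    for _ in range(length):
        nxt = predict_next(model, words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return " ".join(words)


corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."
model = train_bigram_model(corpus)
print(predict_next(model, "the"))         # cat
print(generate(model, "dog", length=4))  # dog sat on the cat
```

The odd output "dog sat on the cat" shows why real models need far more context than one previous word: the bigram model only knows that "the" is most often followed by "cat".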

GPT-3 is a good option when a machine needs to generate a large volume of text from a small amount of input. In many situations, having a human on hand to write the text is not possible or practical, or automatically generated text that reads as human-written is required. Sales teams can use GPT-3 to communicate with potential customers, customer service centers can use it to answer customer inquiries or power support chatbots, and marketing teams can use it to write copy.

Despite its enormous size and power, GPT-3 comes with a number of limitations and concerns. The main problem is that GPT-3 does not keep learning: because it is pre-trained, it has no ongoing long-term memory that learns from each interaction. GPT-3 also shares the problems that affect all neural networks, including a lack of interpretability; it cannot explain why particular inputs lead to particular outputs.

Disclaimer

In line with the Trust Project guidelines, please note that the information provided on this page is not intended to be and should not be interpreted as legal, tax, investment, financial, or any other form of advice. It is important to only invest what you can afford to lose and to seek independent financial advice if you have any doubts. For further information, we suggest referring to the terms and conditions as well as the help and support pages provided by the issuer or advertiser. MetaversePost is committed to accurate, unbiased reporting, but market conditions are subject to change without notice.

About The Author

Damir is the team leader, product manager, and editor at Metaverse Post, covering topics such as AI/ML, AGI, LLMs, Metaverse, and Web3-related fields. His articles attract a massive audience of over a million users every month. He has 10 years of experience in SEO and digital marketing. Damir has been mentioned in Mashable, Wired, Cointelegraph, The New Yorker, Inside.com, Entrepreneur, BeInCrypto, and other publications. He travels between the UAE, Turkey, Russia, and the CIS as a digital nomad. Damir earned a bachelor's degree in physics, which he believes has given him the critical thinking skills needed to be successful in the ever-changing landscape of the internet.
