Databricks Announces Dolly, Another “Budget” Open-Source ChatGPT Competitor

In Brief

Dolly is an Alpaca clone created by Databricks to democratize access to large language models.

It can be trained on a small amount of data for only $30 in about 3 hours.

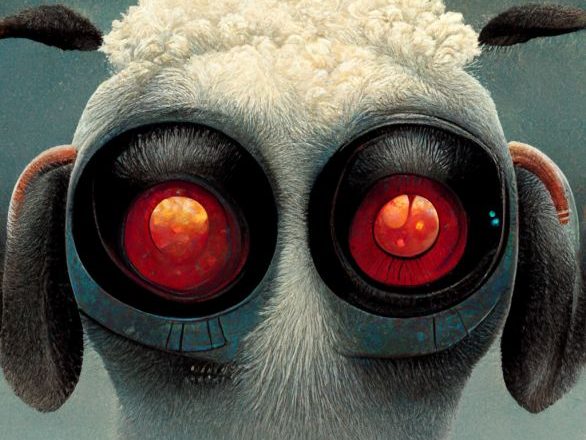

Last week, attention turned to Alpaca, a ChatGPT-like model whose training cost hundreds of times less than OpenAI's models because it relied on synthetic data generated with GPT. Now meet Dolly, an Alpaca clone named after Dolly the cloned sheep, whose mission is to democratize access to large language models. Developers at Databricks say that Dolly can be trained on a small amount of data for only $30 in about 3 hours, with no need for a supercomputer costing tens of thousands of dollars.

Dolly is expected to be a game-changer in the field of natural language processing, as it will allow small businesses and individuals to develop their own language models without breaking the bank. This democratization of access to large language models could lead to a surge in innovation and creativity in the field of AI.

Dolly is based on GPT-J, an open-source language model from EleutherAI released in 2021, which has only 6 billion parameters compared to 175 billion in GPT-3. This older model was fine-tuned on the data from the Alpaca project mentioned above, which gave it the ability to follow user instructions, something the original version lacked. It can now work as a chatbot, generate text, and brainstorm on a given topic.
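To make the fine-tuning step more concrete, here is a minimal sketch of how one instruction-following training example might be turned into a single text string for a language model to learn from. The template wording, the field names (`instruction`, `input`, `response`), and the `format_example` helper are illustrative assumptions in the general style of instruction-tuning datasets, not Databricks' actual code.

```python
# Hypothetical sketch: turning an instruction/response record into one training
# string. The template text and field names are assumptions for illustration.

PROMPT_WITH_INPUT = (
    "Below is an instruction that describes a task, paired with an input "
    "that provides further context.\n\n"
    "### Instruction:\n{instruction}\n\n"
    "### Input:\n{input}\n\n"
    "### Response:\n"
)

PROMPT_NO_INPUT = (
    "Below is an instruction that describes a task.\n\n"
    "### Instruction:\n{instruction}\n\n"
    "### Response:\n"
)


def format_example(record: dict) -> str:
    """Build the full text (prompt plus response) used as one training sample."""
    if record.get("input"):
        prompt = PROMPT_WITH_INPUT.format(
            instruction=record["instruction"], input=record["input"]
        )
    else:
        # Records without extra context use the shorter template.
        prompt = PROMPT_NO_INPUT.format(instruction=record["instruction"])
    return prompt + record["response"]


example = {
    "instruction": "Name three uses for a language model.",
    "input": "",
    "response": "Chatting, drafting text, and brainstorming ideas.",
}
text = format_example(example)
print(text)
```

A base model trained on many such strings would see the same prompt layout at inference time and would be expected to continue the text after the response marker, which is one plausible way the "follow user prompts" behavior could be taught.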

Despite having only 6 billion parameters, Dolly has shown impressive performance in language tasks and has the potential to be a more efficient and cost-effective alternative to larger models like GPT. With further development and training, Dolly could become a valuable tool for natural language processing applications.

From this, Databricks concludes that much of what makes ChatGPT impressive comes from the quality of the data the chatbot was trained on rather than from the technical sophistication of the model itself. After all, the developers explain, Dolly learned similar abilities in a very short time, although not to the same level. This highlights the importance of high-quality training data in building effective chatbots. It also suggests that technical advancements in natural language processing may not always be the key factor in improving chatbot performance.

- OpenAI’s ChatGPT language model is adding new plugins to expand its capabilities. These plugins connect ChatGPT to third-party applications, allowing it to retrieve real-time and knowledge-based information, as well as perform actions like booking a flight or ordering food. Developers can access documentation to build their plugins, and ChatGPT acts as an intelligent API caller by using the API specification and natural language descriptions.

About The Author

Damir is the team leader, product manager, and editor at Metaverse Post, covering topics such as AI/ML, AGI, LLMs, Metaverse, and Web3-related fields. His articles attract a massive audience of over a million users every month. He appears to be an expert with 10 years of experience in SEO and digital marketing. Damir has been mentioned in Mashable, Wired, Cointelegraph, The New Yorker, Inside.com, Entrepreneur, BeInCrypto, and other publications. He travels between the UAE, Turkey, Russia, and the CIS as a digital nomad. Damir earned a bachelor's degree in physics, which he believes has given him the critical thinking skills needed to be successful in the ever-changing landscape of the internet.
