Nvidia’s Grace Hopper Superchips Are Now in Production

In Brief

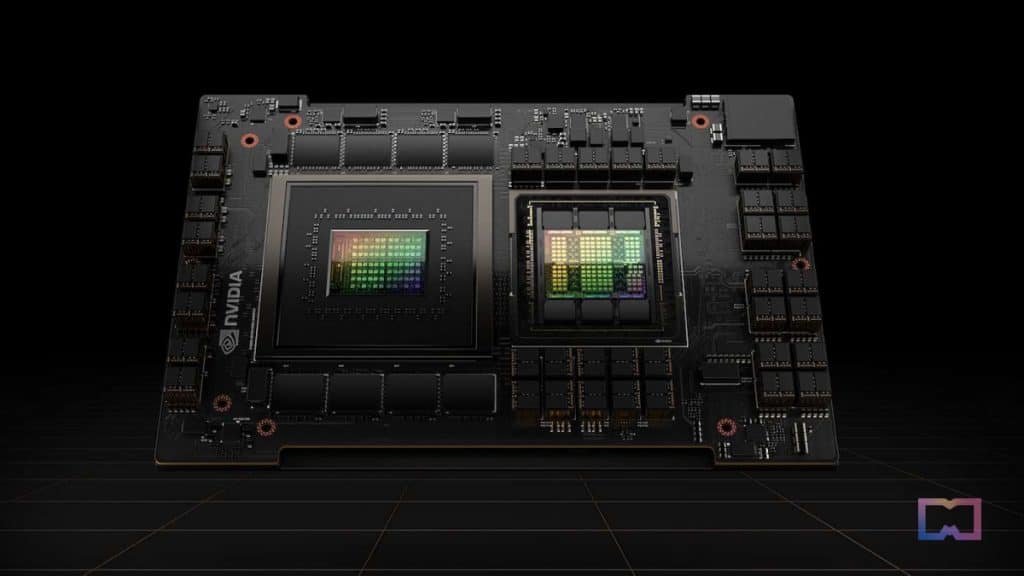

Nvidia announced that the Nvidia GH200 Grace Hopper Superchip is currently in full production.

The chips will power complex AI systems.

Nvidia has announced that the Nvidia GH200 Grace Hopper Superchip is in full production. The chip will power systems that run complex AI programs.

The GH200-powered systems join more than 400 system configurations based on Nvidia’s latest CPU and GPU architectures, including Grace, Hopper, and Ada Lovelace, and are also targeted at high-performance computing (HPC) workloads. They are reportedly scheduled to become available later this year.

At the Computex exhibition in Taiwan, Jensen Huang, CEO of Nvidia, revealed new systems, partners, and additional information about the GH200 Grace Hopper Superchip. The chip combines the Arm-based Nvidia Grace CPU and the Hopper GPU architecture using Nvidia NVLink-C2C interconnect technology.

With up to 900GB/s of total bandwidth, the interconnect delivers up to seven times the bandwidth of the standard PCIe Gen5 lanes found in traditional accelerated systems, providing the compute capability needed for the most demanding generative AI and HPC applications.
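The "seven times" figure can be roughly sanity-checked with a little arithmetic. The sketch below assumes the comparison is against a single PCIe Gen5 x16 link counted in both directions (32 GT/s per lane, 128b/130b encoding). The article does not state which PCIe configuration Nvidia used as its baseline, so this is an illustration, not Nvidia’s own calculation.

```python
# Rough check of the "up to seven times PCIe Gen5" bandwidth claim.
# Assumption (not stated in the article): the baseline is one PCIe Gen5 x16
# link, counted bidirectionally, with 128b/130b line encoding.

NVLINK_C2C_GB_S = 900.0          # total bandwidth quoted in the article
PCIE_GEN5_GT_S_PER_LANE = 32.0   # giga-transfers per second, per lane
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b encoding overhead
LANES = 16                       # assumed x16 slot


def pcie_gen5_x16_bidirectional_gb_s() -> float:
    """Approximate usable bandwidth of a PCIe Gen5 x16 link in GB/s."""
    # One transfer carries one bit per lane; divide by 8 to get bytes.
    per_lane_gb_s = PCIE_GEN5_GT_S_PER_LANE * ENCODING_EFFICIENCY / 8
    # Multiply by lane count, then by 2 to count both directions.
    return per_lane_gb_s * LANES * 2


pcie_gb_s = pcie_gen5_x16_bidirectional_gb_s()
ratio = NVLINK_C2C_GB_S / pcie_gb_s

print(f"PCIe Gen5 x16 (bidirectional): {pcie_gb_s:.1f} GB/s")
print(f"NVLink-C2C vs PCIe Gen5 x16:  {ratio:.1f}x")
```

Under these assumptions the ratio comes out at roughly seven, which is consistent with the figure quoted above. A different baseline, such as a narrower link or a single direction, would give a different multiple.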

“Generative AI is rapidly transforming businesses, unlocking new opportunities and accelerating discovery in healthcare, finance, business services and many more industries. With Grace Hopper Superchips in full production, manufacturers worldwide will soon provide the accelerated infrastructure enterprises need to build and deploy generative AI applications that leverage their unique proprietary data,” said Ian Buck, vice president of accelerated computing at Nvidia.

Global hyperscalers and supercomputing centers in Europe and the U.S. are among the customers that will have access to GH200-powered systems. Global system manufacturers, including AAEON, Advantech, Aetina, ASRock Rack, Asus, Gigabyte, Ingrasys, Inventec, Pegatron, QCT, Tyan, Wistron and Wiwynn, will be using this technology.

Global server manufacturers Cisco, Dell Technologies, Hewlett Packard Enterprise, Lenovo, Supermicro, and Eviden, an Atos company, offer a broad variety of Nvidia-accelerated systems. Cloud partners for the Nvidia H100 include Amazon Web Services, Cirrascale, CoreWeave, Google Cloud, Lambda, Microsoft Azure, Oracle Cloud Infrastructure, Paperspace and Vultr.

Nvidia AI Enterprise, the software layer of the Nvidia AI platform, provides more than 100 frameworks, pretrained models, and development tools. These cover generative AI, computer vision, and speech AI.

About The Author

Valeria is a reporter for Metaverse Post. She focuses on fundraises, AI, metaverse, digital fashion, NFTs, and everything web3-related. Valeria has a Master’s degree in Public Communications and is getting her second Major in International Business Management. She dedicates her free time to photography and fashion styling. At the age of 13, Valeria created her first fashion-focused blog, which developed her passion for journalism and style. She is based in northern Italy and often works remotely from different European cities. You can contact her at [email protected]
