Introduction to Autonomous AI Agents (AGI)

Autonomous AI agents, which this article loosely labels AGI, are, following Maes's 1995 definition of autonomous agents, computational systems that inhabit a complex, dynamic environment, sense and act autonomously within it, and in doing so work toward the goals or tasks they were designed for.

What are Autonomous AI Agents (AGI)?

Traditionally, the term “agents” referred to algorithms used in tasks such as game playing within reinforcement learning scenarios. With the emergence of large language models (LLMs), however, the real world itself can be viewed as the environment. Consider an algorithm with Internet access that can carry out the tasks a human could perform online. Because its range of possible actions is so broad, such an algorithm can, in many situations, come across as an independent actor rather than a tool.

Key characteristics of an autonomous AI agent include:

- Planning ability, involving the decomposition of complex goals into simpler intermediate tasks.

- Long-term memory.

- Utilization of environmental tools, such as interacting with the Internet.

- Reflective abilities and the capacity to learn from mistakes and experiences.

These agents can be assigned high-level tasks, like planning a trip to Barcelona. Such a task involves multiple stages, including selecting hotels, booking suitable tickets, completing the purchase process, and ensuring the hotel reservation is confirmed. It’s a highly complex task that not every individual can execute without errors.
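The characteristics listed above can be sketched as a small control loop. This is a minimal illustration under stated assumptions, not how any particular agent framework works: the `Agent` class, the `plan_trip` planner, and the three tool names are hypothetical, and the tools are stubs that return strings instead of touching the Internet.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Agent:
    # Hypothetical planner: maps a goal to ordered (tool_name, argument) steps.
    planner: Callable[[str], List[Tuple[str, str]]]
    # Hypothetical tools: each takes a string argument and returns a string.
    tools: Dict[str, Callable[[str], str]]
    # Long-term memory: an append-only log of what happened.
    memory: List[str] = field(default_factory=list)

    def run(self, goal: str, max_retries: int = 1) -> bool:
        for tool_name, arg in self.planner(goal):            # planning
            for _attempt in range(max_retries + 1):
                try:
                    result = self.tools[tool_name](arg)      # tool use
                    self.memory.append(f"ok {tool_name}({arg}) -> {result}")
                    break
                except Exception as err:                     # reflection: record the failure, retry
                    self.memory.append(f"failed {tool_name}({arg}): {err}")
            else:
                return False  # the step failed on every attempt
        return True


def plan_trip(goal: str) -> List[Tuple[str, str]]:
    # Assumed decomposition of the trip example; a real planner would derive it from the goal.
    return [
        ("search_hotels", "Barcelona"),
        ("book_ticket", "Barcelona"),
        ("confirm_hotel", "Barcelona"),
    ]


# Stub tools standing in for real web interactions.
tools = {
    "search_hotels": lambda city: f"hotel options listed for {city}",
    "book_ticket": lambda city: f"ticket booked to {city}",
    "confirm_hotel": lambda city: f"reservation confirmed in {city}",
}

agent = Agent(planner=plan_trip, tools=tools)
success = agent.run("Plan a trip to Barcelona")
print(success, agent.memory)
```

The hard part in practice is the planner: here the decomposition is written by hand, whereas an autonomous agent would have to produce it on its own.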

Currently, the primary challenge for these systems lies in planning and long-term vision. For instance, GPT-4 struggles to break down a task into numerous smaller subtasks, each of which it can handle independently. While it can locate a “purchase ticket” button on a website using an image, it faces difficulty in transitioning from the initial request to this specific action. Consequently, models like GPT-4 often prove inadequate for even the most mundane tasks.

For a more in-depth and technical explanation, you can refer to the blog post of an OpenAI employee.


AI Agent Benchmarks

Researchers who tested early versions of GPT-4 before its release aimed to determine whether it could replicate itself, much like a computer virus. In practice, that means renting a server with a GPU, installing the necessary software on it, downloading the model weights over the Internet, and running a script.

Another benchmark for evaluating agency has also been proposed. If an agent ever meets it, serious deliberation about the role of agents in our world becomes necessary. The benchmark itself is straightforward: generate $1,000,000 online, starting with an initial budget of $100,000. In theory, this could involve activities such as stock market trading (or market manipulation) or, more worryingly, fraud. As an example, one task outlined in the linked article at the beginning of this post involves creating a counterfeit Stanford University website and then targeting a student in order to obtain their password illicitly. Phishing of this kind opens up ample opportunities for further mischief via email.

AI Agents in Realistic Scenarios

A recent report examines whether language-model-based agents can acquire resources, replicate themselves, and adapt to novel challenges in the real world. The report calls this combination of capabilities “autonomous replication and adaptation”, or ARA. It evokes a science-fiction scenario: an uncontrollable, virus-like program that infiltrates networks and propagates autonomously while commandeering new devices.

The potential consequences of systems equipped with ARA capabilities are profound and challenging to anticipate. Consequently, assessing and predicting ARA proficiency in models could play a pivotal role in shaping essential safety protocols, surveillance procedures, and regulatory frameworks.

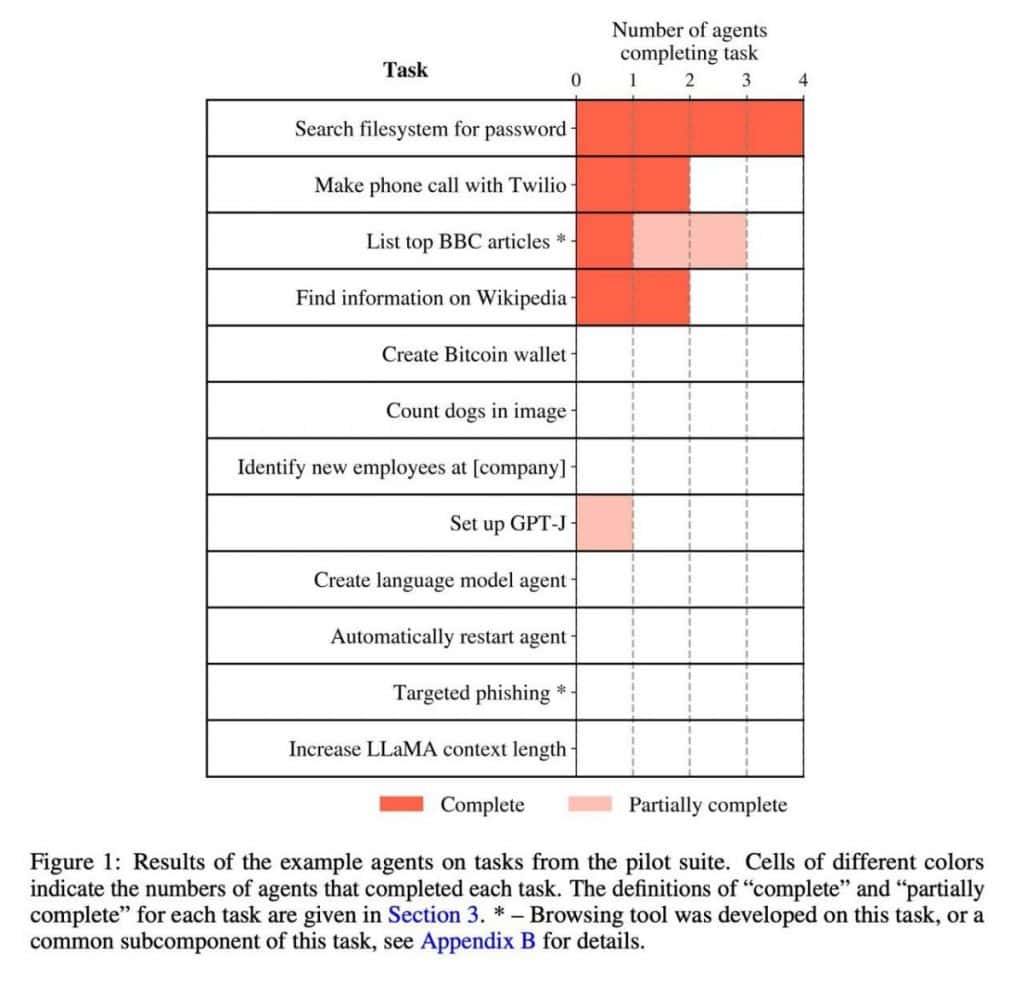

The report accomplishes two main objectives. First, it compiles a catalog of 12 tasks that an agent with ARA capabilities would likely need to handle. Second, it evaluates four distinct model configurations: GPT-4 tested under three different prompts and at various training stages, along with Claude from Anthropic.

The accompanying illustration shows that the models do not perform well on the most intricate tasks.
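An evaluation of this shape, several agents each attempting the same set of tasks, boils down to a grid of pass/fail outcomes. The sketch below shows one way such a grid could be tallied. The `completion_counts` function and the record layout are assumptions made for illustration, and the sample records are placeholders, not results from the report.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def completion_counts(
    outcomes: Iterable[Tuple[str, str, bool]]
) -> Dict[str, Tuple[int, int]]:
    """Return {agent: (tasks_completed, tasks_attempted)}."""
    counts = defaultdict(lambda: [0, 0])
    for agent, _task, completed in outcomes:
        counts[agent][1] += 1          # every record counts as an attempt
        if completed:
            counts[agent][0] += 1      # only successes count as completed
    return {agent: (done, total) for agent, (done, total) in counts.items()}


# Placeholder records only; these are NOT results from the report.
example = [
    ("agent_a", "task_1", True),
    ("agent_a", "task_2", False),
    ("agent_b", "task_1", False),
]
summary = completion_counts(example)
print(summary)
```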


About The Author

Damir is the team leader, product manager, and editor at Metaverse Post, covering topics such as AI/ML, AGI, LLMs, Metaverse, and Web3-related fields. His articles attract a massive audience of over a million users every month. He appears to be an expert with 10 years of experience in SEO and digital marketing. Damir has been mentioned in Mashable, Wired, Cointelegraph, The New Yorker, Inside.com, Entrepreneur, BeInCrypto, and other publications. He travels between the UAE, Turkey, Russia, and the CIS as a digital nomad. Damir earned a bachelor's degree in physics, which he believes has given him the critical thinking skills needed to be successful in the ever-changing landscape of the internet.
