Google introduces its new AI model for HD video creation, powered by Imagen and Phenaki

In Brief

Google unveils new AI model for HD video creation

AI-powered video can help bridge the gap between people of different cultures

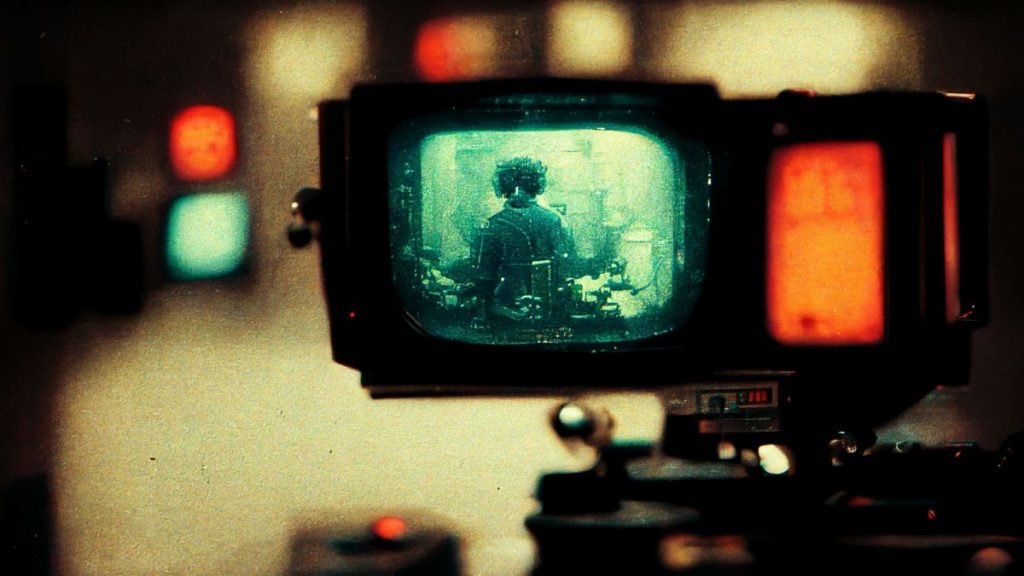

Google’s new AI model for HD video creation is a deep-learning model that generates high-resolution videos from text inputs. This is a significant advance in video creation: it could let creators produce higher-quality videos without expensive equipment or hours of painstaking work.

Google’s new AI model for HD video creation combines Google Imagen and Phenaki to produce long videos from one or more text prompts. Phenaki, “a model capable of realistic video synthesis given a sequence of text hints,” uses a large language model to produce tokens over time, which the system then uses to construct a long, coherent story.

Think of it this way: a screenplay is broken up into prompts, one neural network keeps track of how those prompts connect, a second neural network creates short clips, and the AI then “edits” them together into long shots.
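The division of labor described above can be sketched as a toy pipeline. This is only an illustration under stated assumptions, not Google's implementation: `split_screenplay`, `generate_clip`, and `make_video` are hypothetical names, and each "frame" is a text label rather than an image. The last frame of the previous clip stands in for the state that keeps the story connected.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Clip:
    prompt: str
    frames: List[str]  # placeholder "frames": text labels, not pixels


def split_screenplay(screenplay: str) -> List[str]:
    """Break a screenplay into one prompt per non-empty line."""
    return [line.strip() for line in screenplay.splitlines() if line.strip()]


def generate_clip(prompt: str, prev_last_frame: Optional[str], n_frames: int = 3) -> Clip:
    """Hypothetical stand-in for a video generator.

    A real model would synthesize images; here each frame just records
    the prompt and the frame it continues from.
    """
    origin = prev_last_frame or "start"
    frames = [f"{prompt}#{i} (continues from {origin})" for i in range(n_frames)]
    return Clip(prompt, frames)


def make_video(screenplay: str, n_frames: int = 3) -> List[str]:
    """Generate one short clip per prompt and join them into one sequence."""
    video: List[str] = []
    prev: Optional[str] = None
    for prompt in split_screenplay(screenplay):
        clip = generate_clip(prompt, prev, n_frames)
        video.extend(clip.frames)
        prev = clip.frames[-1]  # carry context forward to the next clip
    return video


if __name__ == "__main__":
    for frame in make_video("A cat sits on a sofa\nThe cat jumps to the floor", n_frames=2):
        print(frame)
```

Passing the previous clip's final frame into the next call is the simplest way to show continuity between prompts; an actual system would condition on far richer state.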

Using video, images, and design, people from all cultures may be able to express themselves in ways they previously couldn’t thanks to AI-powered generative models. Our researchers have been working hard to create models that are the best in the industry at producing images that human raters prefer above those produced by other models. Recent significant developments include the application of our diffusion model to video sequences and the creation of lengthy, cohesive films in response to a series of text cues. We can combine these methods to create video; today, we’re providing super-resolution footage created by AI for the first time.

Jeff Dean, Google Senior Fellow and SVP, Google Research

The model first generates a low-resolution version of the video, then upscales it with a specialized algorithm. The upscaling step retains the details and sharpness of the low-resolution video while also adding new details that were not present in it.
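The two-step idea (generate small, then upscale in stages) can be illustrated with a short sketch. Everything here is an assumption made for illustration: `make_low_res_video` is a hypothetical stand-in for the generator, and plain nearest-neighbor interpolation is swapped in for the specialized upscaler. Unlike the model described above, nearest-neighbor upscaling cannot add new detail; it only shows how resolution grows from one stage to the next.

```python
from typing import List

Frame = List[List[float]]  # one grayscale frame as rows of pixel values


def make_low_res_video(n_frames: int, height: int, width: int) -> List[Frame]:
    """Hypothetical stand-in for the base generator: deterministic gradient frames."""
    return [
        [[((t + y + x) % 10) / 10 for x in range(width)] for y in range(height)]
        for t in range(n_frames)
    ]


def upscale_frame(frame: Frame, factor: int) -> Frame:
    """Nearest-neighbor upscaling: repeat every pixel `factor` times in both directions."""
    out: Frame = []
    for row in frame:
        wide = [pixel for pixel in row for _ in range(factor)]
        out.extend(list(wide) for _ in range(factor))
    return out


def upscale_video(video: List[Frame], factor: int, stages: int) -> List[Frame]:
    """Apply the upscaler `stages` times, as in a multi-stage cascade."""
    for _ in range(stages):
        video = [upscale_frame(frame, factor) for frame in video]
    return video


if __name__ == "__main__":
    low = make_low_res_video(n_frames=2, height=3, width=4)
    high = upscale_video(low, factor=2, stages=2)
    print(len(low[0]), len(low[0][0]))    # low-resolution height and width
    print(len(high[0]), len(high[0][0]))  # height and width after two 2x stages
```

In a learned upscaler, each stage would predict plausible high-frequency detail instead of copying pixels, which is what lets the output look sharper than the low-resolution input.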

This is an exciting development for the video creation industry, and it is likely to have a major impact on the quality of videos produced in the future.



About The Author

Damir is the team leader, product manager, and editor at Metaverse Post, covering topics such as AI/ML, AGI, LLMs, Metaverse, and Web3-related fields. His articles attract a massive audience of over a million users every month. He appears to be an expert with 10 years of experience in SEO and digital marketing. Damir has been mentioned in Mashable, Wired, Cointelegraph, The New Yorker, Inside.com, Entrepreneur, BeInCrypto, and other publications. He travels between the UAE, Turkey, Russia, and the CIS as a digital nomad. Damir earned a bachelor's degree in physics, which he believes has given him the critical thinking skills needed to be successful in the ever-changing landscape of the internet.
