TextMesh: A New Stable Diffusion-Based Text-to-3D Model From Google

In Brief

TextMesh is a new text-to-3D method from Google. It improves on the now-popular approach of using Stable Diffusion to produce 2D pictures of the same text prompt from different angles and then assembling a 3D mesh from them with NeRF.

Recently, generating 2D images from text prompts has seen great success thanks to diffusion-based image generation models. These models produce high-quality image samples from a text prompt, which makes for a simple text-to-image interface. Building on these advances in 2D image generation, the big question in the industry is whether similar diffusion models can be used to generate 3D models from text.

Now Google has introduced a new text-to-3D method called TextMesh. It promises to improve on the now-popular Stable Diffusion-based approach to text-to-3D generation. At its core, the text prompt is used to produce 2D views of the object from multiple angles, and the results are then combined into a 3D mesh using the Neural Radiance Fields (NeRF) approach.
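As a rough illustration of what "multiple angles of the same prompt" can mean in practice, the sketch below places cameras on a circle around the object and appends a coarse view label to the prompt for each one. This view-label trick is commonly described for diffusion-guided text-to-3D pipelines; the function names, angle thresholds, radius, and elevation here are illustrative assumptions, not details taken from TextMesh.

```python
import math

def camera_position(azimuth_deg, elevation_deg=15.0, radius=2.5):
    """Place a camera on a sphere around the origin (values are assumptions)."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    x = radius * math.cos(el) * math.sin(az)
    y = radius * math.sin(el)
    z = radius * math.cos(el) * math.cos(az)
    return (x, y, z)

def view_prompt(prompt, azimuth_deg):
    """Append a coarse view label so the 2D model is asked for a matching angle."""
    az = azimuth_deg % 360.0
    if az < 45.0 or az >= 315.0:
        view = "front view"
    elif 135.0 <= az < 225.0:
        view = "back view"
    else:
        view = "side view"
    return f"{prompt}, {view}"

def sample_views(prompt, num_views=8):
    """Return (camera position, view-specific prompt) pairs, evenly spaced in azimuth."""
    step = 360.0 / num_views
    return [
        (camera_position(i * step), view_prompt(prompt, i * step))
        for i in range(num_views)
    ]

views = sample_views("a squirrel", num_views=4)
for position, text in views:
    print(tuple(round(c, 2) for c in position), text)
```

Each pair would then be used to compare a rendering from that camera against what the 2D model expects for the view-specific prompt.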


The main advantage of this approach over the currently popular DreamFusion and CLIPMesh is its user-friendly output. Rather than the harder-to-use NeRF format, TextMesh produces a textured 3D mesh, which makes it much more applicable to real-world uses. The approach also avoids the high-saturation effect often seen in other models and adds detail.

The model first builds a NeRF-style 3D representation of the prompt on top of a signed distance function (SDF), from which a 3D mesh can be extracted cleanly. The mesh texture is then refined, which improves the overall clarity of the output and avoids the oversaturation that other text-to-3D models often suffer from.
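To see why a signed distance representation lends itself to mesh extraction, consider the minimal sketch below. It samples an analytic SDF (a sphere, standing in for a learned network) on a regular grid and locates the surface wherever the sign flips between neighboring samples, which is the core step of marching-cubes-style extraction. The sphere, grid size, and function names are assumptions made for illustration and are not TextMesh's implementation.

```python
import math

def sphere_sdf(x, y, z, radius=0.5):
    """Signed distance to a sphere: negative inside, positive outside."""
    return math.sqrt(x * x + y * y + z * z) - radius

def surface_points_along_x(sdf, n=16, lo=-1.0, hi=1.0):
    """Find surface points where the SDF changes sign between x-neighbors."""
    step = (hi - lo) / (n - 1)
    coords = [lo + i * step for i in range(n)]
    points = []
    for y in coords:
        for z in coords:
            for i in range(n - 1):
                x0, x1 = coords[i], coords[i + 1]
                d0, d1 = sdf(x0, y, z), sdf(x1, y, z)
                if (d0 < 0.0) != (d1 < 0.0):
                    # Linear interpolation of the zero crossing on this edge.
                    t = d0 / (d0 - d1)
                    points.append((x0 + t * (x1 - x0), y, z))
    return points

points = surface_points_along_x(sphere_sdf)
worst = max(abs(sphere_sdf(*p)) for p in points)
print(len(points), "surface samples, max |sdf| =", round(worst, 4))
```

A full extractor would check all three axes and connect the crossings into triangles; the point here is only that the surface is exactly the zero level of the function, so it can be found without guessing a density threshold.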

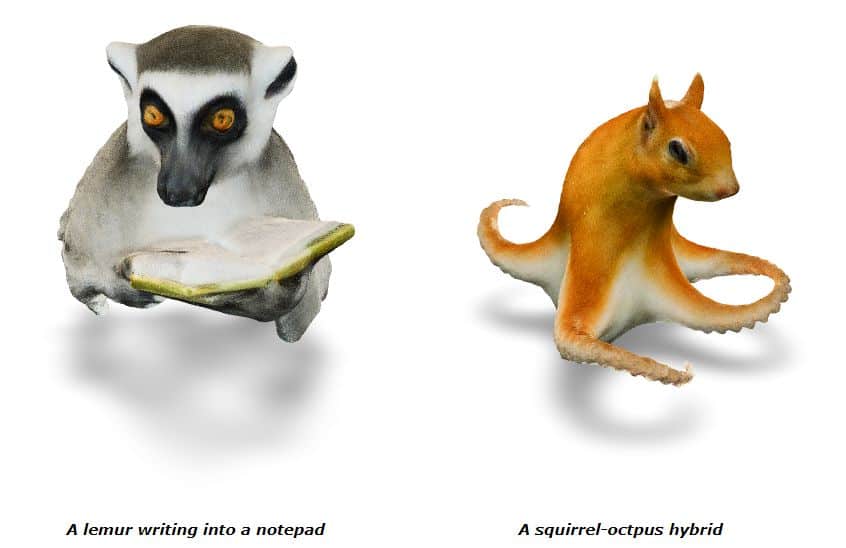

Example 3D meshes generated with TextMesh

This approach takes advantage of the success of diffusion-based image generation models and extends NeRF to use an SDF backbone. This improves 3D mesh extraction and yields much more realistic-looking 3D meshes than the methods mentioned above.
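For readers wondering how an SDF can sit underneath a NeRF-style renderer, one published option is to convert the signed distance into a volume density with a Laplace cumulative distribution function, as done in VolSDF-style methods. The sketch below shows that conversion; the article does not say which conversion TextMesh uses, so the formula choice and the `beta` value are assumptions.

```python
import math

def laplace_cdf(s, beta):
    """CDF of a zero-mean Laplace distribution with scale beta."""
    if s <= 0.0:
        return 0.5 * math.exp(s / beta)
    return 1.0 - 0.5 * math.exp(-s / beta)

def sdf_to_density(signed_distance, beta=0.05):
    """Map a signed distance (negative inside the object) to a volume density.

    Density is high inside, drops smoothly across the surface, and
    approaches zero outside. Smaller beta gives a sharper transition.
    """
    alpha = 1.0 / beta
    return alpha * laplace_cdf(-signed_distance, beta)

for d in (-0.2, 0.0, 0.2):
    print(d, sdf_to_density(d))
```

The resulting density can be fed to ordinary volume rendering, while the surface itself stays defined as the zero level of the distance function.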

The results from TextMesh are convincing. The authors also link to an image of a squirrel generated with their method, and it is impressive.

TextMesh is a promising new text-to-3D method that offers several advantages over earlier approaches and can produce realistic, textured 3D meshes. Its use is likely to grow in the near future.



About The Author

Damir is the team leader, product manager, and editor at Metaverse Post, covering topics such as AI/ML, AGI, LLMs, Metaverse, and Web3-related fields. His articles attract a massive audience of over a million users every month. He appears to be an expert with 10 years of experience in SEO and digital marketing. Damir has been mentioned in Mashable, Wired, Cointelegraph, The New Yorker, Inside.com, Entrepreneur, BeInCrypto, and other publications. He travels between the UAE, Turkey, Russia, and the CIS as a digital nomad. Damir earned a bachelor's degree in physics, which he believes has given him the critical thinking skills needed to be successful in the ever-changing landscape of the internet.
