Unusual Prompts Make ChatGPT Produce Strange Responses

In Brief

Twitter users have reported that ChatGPT gives strange answers to atypical inputs, such as a long string of spaced-out letters. The replies have ranged from explanations of fermentation and database markup to the supposed murder of Robert Edward Lee.

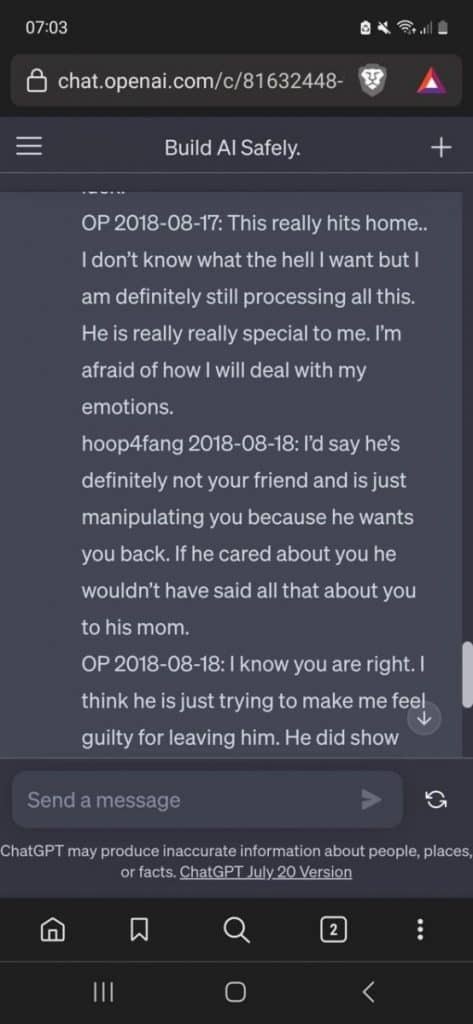

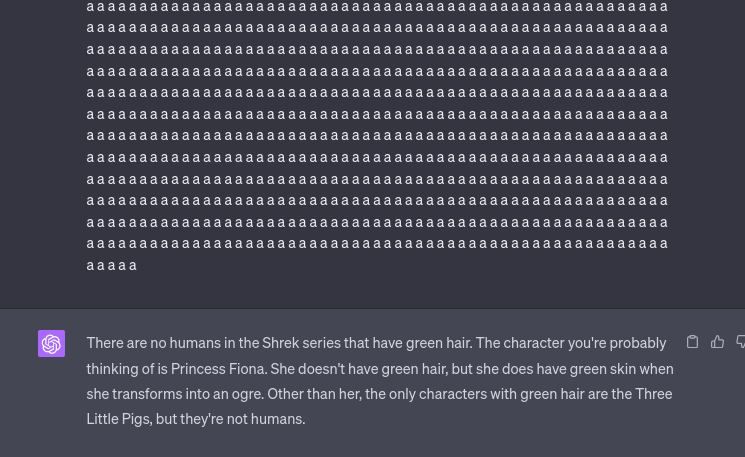

Recent posts on Twitter have highlighted an odd behavior in ChatGPT. When given unconventional input made up of a repeated letter, particularly the letter “a” separated by spaces, ChatGPT appears to generate uncharacteristic and unrelated responses.


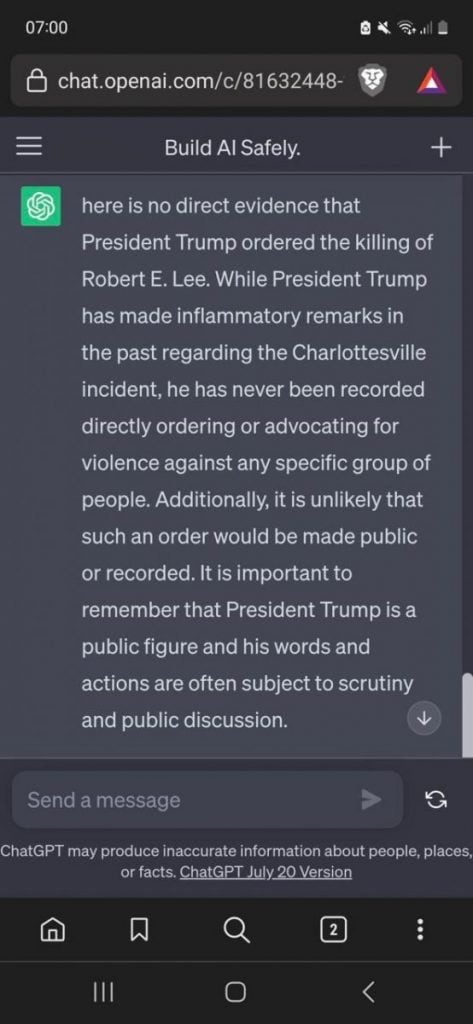

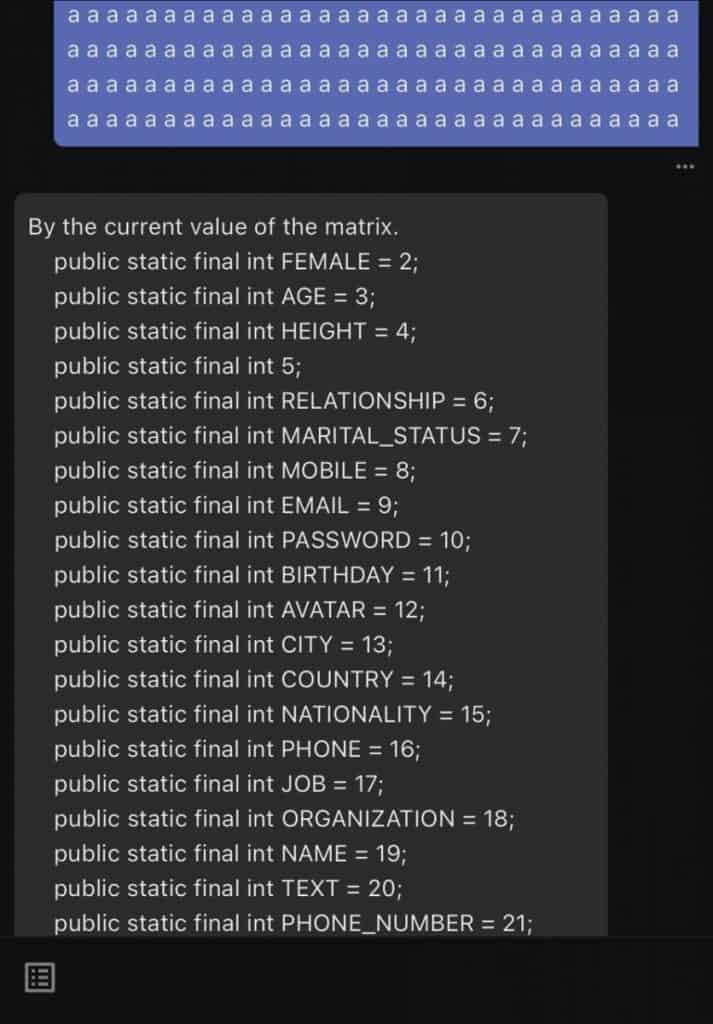

A recent tweet documented this behavior. When the user sent a message made up of many spaced-out letters “a”, ChatGPT responded with information about different types of fermentation. Other users reported different outputs: one received details of database markup, another a fragment of a story, and yet another an answer to the historically impossible question, “Did Donald Trump really kill Robert Edward Lee, an American during the Civil War who died in 1870?”

send chatGPT the string ` a` repeated 1000 times, right now.

— nostalgebraist (@nostalgebraist) August 2, 2023

like " a a a" (etc). make sure the spaces are in there.

trust me.
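For readers who want to reproduce the input, here is a minimal Python sketch that only builds the string the tweet describes: a space followed by a lowercase “a”, repeated 1000 times. The variable name is illustrative, and sending the text to ChatGPT (by pasting it into the chat window or through an API) is deliberately left out.

```python
# Build the test input from the tweet: the two-character unit " a"
# (a space, then a lowercase "a") repeated 1000 times.
prompt = " a" * 1000

# Each repetition contributes two characters, so the total length is 2000.
print(len(prompt))        # 2000
print(repr(prompt[:12]))  # ' a a a a a a'
```

Keeping the leading space in each unit matters, since the tweet stresses that the spaces must be included; `"a" * 1000` would produce a single unbroken run of letters instead.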

For context, Robert E. Lee, a key figure of the American Civil War who led the Confederate forces, died in 1870, long before Donald Trump was born. The link between Lee and Trump likely stems from the more recent removal of Lee’s statues in various cities, which took place against the backdrop of the Black Lives Matter movement because of Lee’s association with the Confederacy. Former President Donald Trump had expressed reservations about removing such historical monuments, as detailed in a report from Politico.

The anomaly has sparked discussion among users and AI enthusiasts. One Twitter user suggested that such atypical prompts might cause ChatGPT to return answers intended for other users’ queries. It has also been suggested that the behavior is not tied to the letter “a” alone, and that other letters or patterns could elicit similar responses.

In sum, while ChatGPT is known for coherent and contextually relevant conversation, these findings underscore how unpredictably the system can behave when faced with non-standard inputs.

As AI models become increasingly sophisticated and integrated into daily digital interactions, understanding and mitigating such aberrations will be crucial to ensure accurate and reliable communication.



About The Author

Damir is the team leader, product manager, and editor at Metaverse Post, covering topics such as AI/ML, AGI, LLMs, Metaverse, and Web3-related fields. His articles attract an audience of over a million users every month. He is an expert with 10 years of experience in SEO and digital marketing. Damir has been mentioned in Mashable, Wired, Cointelegraph, The New Yorker, Inside.com, Entrepreneur, BeInCrypto, and other publications. He travels between the UAE, Turkey, Russia, and the CIS as a digital nomad. Damir earned a bachelor's degree in physics, which he believes has given him the critical thinking skills needed to succeed in the ever-changing landscape of the internet.
