Ritsumeikan University Researchers Develop AI Network to Enhance Autonomous Vehicle Safety

In Brief

Ritsumeikan University researchers unveil DPPFA-Net, a 3D object detection network designed to boost detection accuracy for robots and autonomous vehicles.

Researchers at Japan’s Ritsumeikan University have unveiled the Dynamic Point-Pixel Feature Alignment Network (DPPFA-Net), a 3D object detection network that combines LiDAR and camera image data. The network is designed to improve detection accuracy for robots and self-driving cars, particularly in adverse weather and when objects are partially occluded.

In the rapidly evolving landscape of robotics and autonomous vehicles, accurate perception of the surroundings is vital for safety and efficiency. Traditional 3D object detection methods rely primarily on LiDAR sensors, which generate 3D point clouds, but these methods struggle in adverse weather such as rain, where LiDAR’s sensitivity to noise becomes a limiting factor.

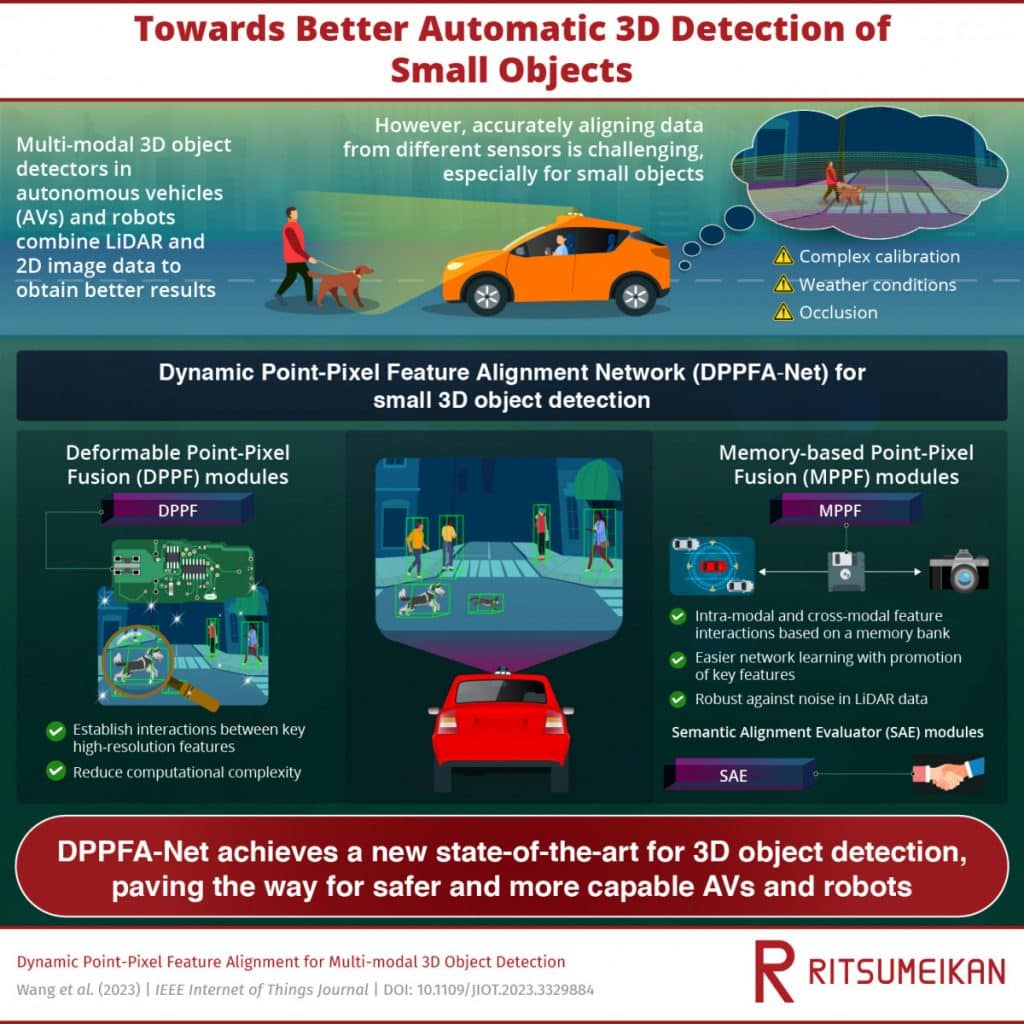

To address these limitations, the research team adopted a multi-modal approach that combines 3D LiDAR data with 2D RGB images captured by standard cameras. Although such fusion improves 3D detection, accurately aligning information from the two modalities remains a persistent challenge, particularly for small objects.
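The article does not describe how DPPFA-Net pairs LiDAR points with image pixels. As background only, point-pixel fusion methods in general start from a pinhole camera projection: each 3D point is moved into the camera frame with a calibrated extrinsic transform, then mapped to a pixel with the camera's intrinsic matrix `K`. The minimal sketch below shows naive nearest-pixel fusion under that assumption; the function names `project_point` and `fuse_points_with_pixels` are hypothetical, and this is not DPPFA-Net's MPPF or DPPF module.

```python
from typing import List, Optional, Sequence, Tuple

Matrix = Sequence[Sequence[float]]


def mat_vec(m: Matrix, v: Sequence[float]) -> List[float]:
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def project_point(point_lidar: Sequence[float], T_cam_from_lidar: Matrix,
                  K: Matrix, width: int, height: int) -> Optional[Tuple[float, float]]:
    """Return the (u, v) pixel of a 3D LiDAR point, or None if the point is
    behind the camera or projects outside the image.

    Assumes T_cam_from_lidar is a 4x4 rigid transform and K a 3x3 pinhole
    intrinsic matrix whose last row is [0, 0, 1]."""
    x, y, z = point_lidar
    cam = mat_vec(T_cam_from_lidar, [x, y, z, 1.0])[:3]  # LiDAR frame -> camera frame
    if cam[2] <= 1e-6:
        return None  # behind (or on) the camera plane: no valid pixel
    u_h, v_h, w_h = mat_vec(K, cam)  # apply intrinsics (homogeneous pixel coords)
    u, v = u_h / w_h, v_h / w_h  # perspective division
    if 0 <= u < width and 0 <= v < height:
        return (u, v)
    return None


def fuse_points_with_pixels(points: Sequence[Sequence[float]],
                            image: Sequence[Sequence[Tuple[float, ...]]],
                            T_cam_from_lidar: Matrix, K: Matrix) -> List[tuple]:
    """Naive fusion: append the nearest pixel's values to every point that
    lands inside the image. `image` is a list of rows of pixel tuples."""
    height, width = len(image), len(image[0])
    fused = []
    for p in points:
        uv = project_point(p, T_cam_from_lidar, K, width, height)
        if uv is None:
            continue  # point has no pixel to align with; it is simply dropped here
        col = min(int(round(uv[0])), width - 1)
        row = min(int(round(uv[1])), height - 1)
        fused.append(tuple(p) + tuple(image[row][col]))
    return fused
```

With an identity extrinsic and `K = [[100, 0, 50], [0, 100, 40], [0, 0, 1]]`, the point `(1, 0, 10)` lands at pixel `(60, 40)`. Rounding to the nearest pixel is exactly where small calibration or depth errors cause misalignment, which is one reason learned alignment is attractive. Real KITTI calibration also includes a rectifying rotation, which a single 4x4 transform is assumed to absorb here.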

Led by Professor Hiroyuki Tomiyama, the team developed DPPFA-Net, a network built around three key modules: the Memory-based Point-Pixel Fusion (MPPF) module, the Deformable Point-Pixel Fusion (DPPF) module, and the Semantic Alignment Evaluator (SAE) module.

Together, these modules target three problems in the fusion process: how features from the two modalities interact, how to fuse them at high resolution, and how to keep their semantics aligned.

According to the team, DPPFA-Net copes better with adverse weather and occlusion, potentially paving the way for more perceptive and capable autonomous systems.

The researchers benchmarked DPPFA-Net against top-performing models on the widely used KITTI Vision Benchmark, where it achieved average precision improvements of up to 7.18% under various noise conditions. To test the model further, the team built a new noisy dataset with artificial multi-modal noise that simulates rainfall. According to the team, the results showed that DPPFA-Net outperforms existing models both under severe occlusion and across a range of adverse weather scenarios.
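The KITTI results are reported as average precision (AP). The article does not say which AP variant the 7.18% figure refers to, so the following is only a simplified sketch of interpolated AP in the style of KITTI's 40-recall-point variant (AP|R40). It assumes detections have already been matched to ground-truth objects (KITTI does this with class-specific IoU thresholds); the official devkit additionally handles difficulty levels and ignored regions. The function name `interpolated_ap` is hypothetical.

```python
from typing import List


def interpolated_ap(scores: List[float], is_true_positive: List[bool],
                    num_gt: int, num_recall_points: int = 40) -> float:
    """Simplified interpolated average precision.

    scores: confidence of each detection.
    is_true_positive: whether each detection matched a ground-truth object
        (the matching step itself is assumed to be done already).
    num_gt: total number of ground-truth objects.
    """
    if num_gt == 0:
        return 0.0
    # Walk through detections from most to least confident.
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    tp = fp = 0
    recalls, precisions = [], []
    for i in order:
        if is_true_positive[i]:
            tp += 1
        else:
            fp += 1
        recalls.append(tp / num_gt)
        precisions.append(tp / (tp + fp))
    # Sample recall at 1/N, 2/N, ..., 1 and take the best precision reached
    # at or beyond each recall level (0 if that recall is never reached).
    total = 0.0
    for k in range(1, num_recall_points + 1):
        r = k / num_recall_points
        candidates = [p for rec, p in zip(recalls, precisions) if rec >= r]
        total += max(candidates, default=0.0)
    return total / num_recall_points
```

For example, with two ground-truth objects and three detections ranked true, false, true, precision is 1.0 up to recall 0.5 and 2/3 at recall 1.0, giving an AP of 5/6. Missing objects entirely caps the achievable recall, which is how occlusion or noise that hides objects pulls AP down.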

Real-World Implications

Accurate 3D object detection has applications in both autonomous vehicles and robotics. Self-driving cars that rely on such technology stand to benefit from fewer accidents and improved traffic flow. In robotics, the advances could help robots adapt better to their working environments by perceiving small targets more precisely.

The study also suggests that accurate 3D object detection networks could significantly reduce the cost of manual annotation for deep-learning perception systems, which could in turn accelerate progress in the field.

By addressing current challenges in 3D object detection, the research opens the door to further work on perception for robots and self-driving cars that must navigate complex environments.

Disclaimer

In line with the Trust Project guidelines, please note that the information provided on this page is not intended to be and should not be interpreted as legal, tax, investment, financial, or any other form of advice. It is important to only invest what you can afford to lose and to seek independent financial advice if you have any doubts. For further information, we suggest referring to the terms and conditions as well as the help and support pages provided by the issuer or advertiser. MetaversePost is committed to accurate, unbiased reporting, but market conditions are subject to change without notice.

About The Author

Kumar is an experienced Tech Journalist with a specialization in the dynamic intersections of AI/ML, marketing technology, and emerging fields such as crypto, blockchain, and NFTs. With over 3 years of experience in the industry, Kumar has established a proven track record in crafting compelling narratives, conducting insightful interviews, and delivering comprehensive insights. Kumar's expertise lies in producing high-impact content, including articles, reports, and research publications for prominent industry platforms. With a unique skill set that combines technical knowledge and storytelling, Kumar excels at communicating complex technological concepts to diverse audiences in a clear and engaging manner.