New AI Language Model Falcon 180B on Par with GPT-4 and Google’s PaLM 2

In Brief

The Falcon 180B, an AI language model with 180 billion parameters, has set a new standard in the field.

Trained on 3.5 trillion tokens, it outperforms competitors like Meta’s LLaMA 2.

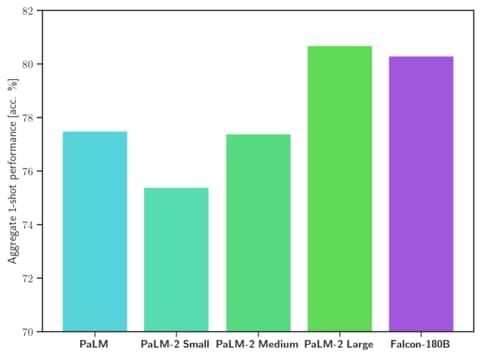

Falcon 180B has emerged as a formidable contender among large language models. With 180 billion parameters and training on 3.5 trillion tokens, it performs strongly across a range of tasks, including reasoning, coding, and knowledge assessments. It not only competes with but often outperforms rivals such as Meta’s LLaMA 2.

Falcon 180B is reported to come close to OpenAI’s GPT-4 on some benchmarks and to perform on par with Google’s PaLM 2 Large, which underscores the potential of this language model.

To access detailed information about Falcon 180B, visit this link, where you can also find a demo and the user agreement.

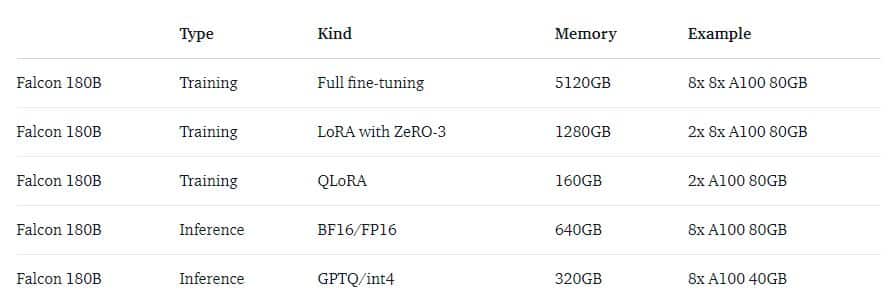

Falcon 180B’s computational demands are substantial, and the model is unlikely to run on standard hardware. The community is looking to upcoming hardware such as the NVIDIA GH200 Grace Hopper, which was announced at SIGGRAPH 2023 and offers 282 GB of VRAM in its dual-chip configuration.
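To put the hardware requirement in numbers, here is a minimal back-of-the-envelope sketch. It assumes that memory is simply the parameter count times the bytes per parameter, and it ignores activations, the KV cache, and framework overhead, so real requirements are higher. The format names and the `weights_gb` helper are illustrative and not taken from any Falcon tooling.

```python
# Rough weights-only memory estimate for a 180-billion-parameter model.
# Assumption: memory = parameters * bytes per parameter. Activations, the
# KV cache, and framework overhead are ignored, so real needs are higher.

PARAMS = 180e9   # parameter count quoted in the article
VRAM_GB = 282    # dual-chip GH200 VRAM figure quoted in the article

# Standard storage sizes for common numeric formats, in bytes per parameter.
BYTES_PER_PARAM = {"fp32": 4, "fp16/bf16": 2, "int8": 1, "int4": 0.5}


def weights_gb(params: float, bytes_per_param: float) -> float:
    """Return the weights-only footprint in gigabytes (1 GB = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


if __name__ == "__main__":
    for fmt, size in BYTES_PER_PARAM.items():
        gb = weights_gb(PARAMS, size)
        verdict = "fits" if gb <= VRAM_GB else "does not fit"
        print(f"{fmt:>9}: {gb:6.0f} GB -> {verdict} in {VRAM_GB} GB")
```

Under these assumptions, 16-bit weights alone come to about 360 GB, which exceeds the 282 GB figure, while 8-bit weights come to about 180 GB and would fit.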

Key Highlights of NVIDIA GH200 Grace Hopper

- The GH200 Grace Hopper is poised to revolutionize the field of Generative AI, featuring not only a powerful GPU but also an integrated ARM processor.

- The base version comes equipped with 141 GB of VRAM and 72 ARM Neoverse cores, complemented by 480 GB of LPDDR5X RAM.

- NVIDIA NVLink technology allows two “superchips” to be combined, resulting in 480×2 GB (960 GB) of LPDDR5X RAM.

- The dual-chip configuration offers 282 GB of VRAM, supported by 144 ARM Neoverse cores and 7.9 PFLOPS of int8 performance, equivalent to a dual H100 NVL.

- Notably, the new HBM3e memory technology boasts a 50% increase in speed compared to its predecessor, HBM3, delivering a remarkable 10 TB/s of combined bandwidth.
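The figures in this list can be cross-checked with a few lines of arithmetic. The sketch below assumes that the dual-chip configuration simply doubles each per-chip figure and that the 10 TB/s combined bandwidth applies to that dual configuration. The `Superchip` class and both helpers are hypothetical names introduced for illustration. The throughput function is a common rule of thumb (each generated token reads all weights once), not a benchmark result.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Superchip:
    """Memory and CPU figures for one configuration (values from the list)."""
    vram_gb: int
    arm_cores: int
    lpddr5x_gb: int


def dual(chip: Superchip) -> Superchip:
    """Assume an NVLink pair simply doubles every per-chip figure."""
    return Superchip(chip.vram_gb * 2, chip.arm_cores * 2, chip.lpddr5x_gb * 2)


def max_tokens_per_second(bandwidth_tb_s: float, weights_gb: float) -> float:
    """Rule-of-thumb ceiling: every generated token streams all weights once."""
    return bandwidth_tb_s * 1000 / weights_gb


# Base configuration as listed: 141 GB VRAM, 72 cores, 480 GB LPDDR5X.
BASE = Superchip(vram_gb=141, arm_cores=72, lpddr5x_gb=480)

if __name__ == "__main__":
    print(dual(BASE))
    # Assumes 8-bit weights for a 180B-parameter model (about 180 GB).
    print(f"{max_tokens_per_second(10, 180):.1f} tokens/s ceiling")
```

Doubling the base figures reproduces the 282 GB of VRAM, 144 cores, and 960 GB of RAM quoted for the dual-chip configuration. The bandwidth ceiling is only an upper bound for single-stream generation under the stated assumptions.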

This hardware innovation promises to unlock new horizons in AI research and applications, enabling more complex and data-intensive tasks to be undertaken seamlessly.



About The Author

Damir is the team leader, product manager, and editor at Metaverse Post, covering topics such as AI/ML, AGI, LLMs, Metaverse, and Web3-related fields. His articles attract a massive audience of over a million users every month. He appears to be an expert with 10 years of experience in SEO and digital marketing. Damir has been mentioned in Mashable, Wired, Cointelegraph, The New Yorker, Inside.com, Entrepreneur, BeInCrypto, and other publications. He travels between the UAE, Turkey, Russia, and the CIS as a digital nomad. Damir earned a bachelor's degree in physics, which he believes has given him the critical thinking skills needed to be successful in the ever-changing landscape of the internet.
