Meta Unveils ‘Emu’ to Improve AI Image Generation

In Brief

Meta AI has developed a method to improve image generation models by fine-tuning them on a small set of exceptionally photogenic images, the “needles in a haystack.”

The process starts by pre-training a diffusion model, conditioned on text encoders, on a vast dataset so that it can generate images at a resolution of 1024×1024 pixels.

The dataset undergoes extensive filtering, with human expertise weeding out subpar images.

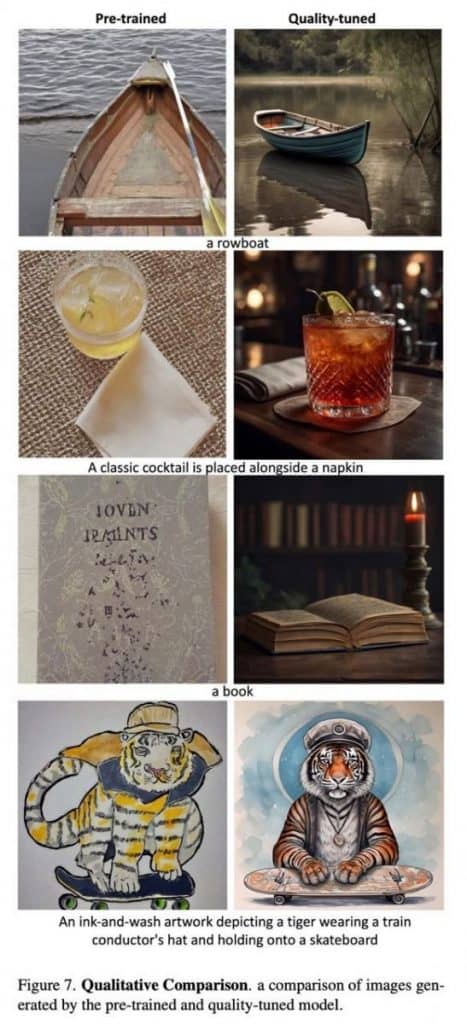

Meta AI recently shared a research paper detailing a novel approach for improving the generation of stickers and images within its services. The paper, titled “Emu: Enhancing Image Generation Models Using Photogenic Needles in a Haystack,” aims to demonstrate how a “quality-tuning” training stage can significantly elevate the quality of image generation, even with a small dataset.

Meta’s Pre-Training Method and Model Details

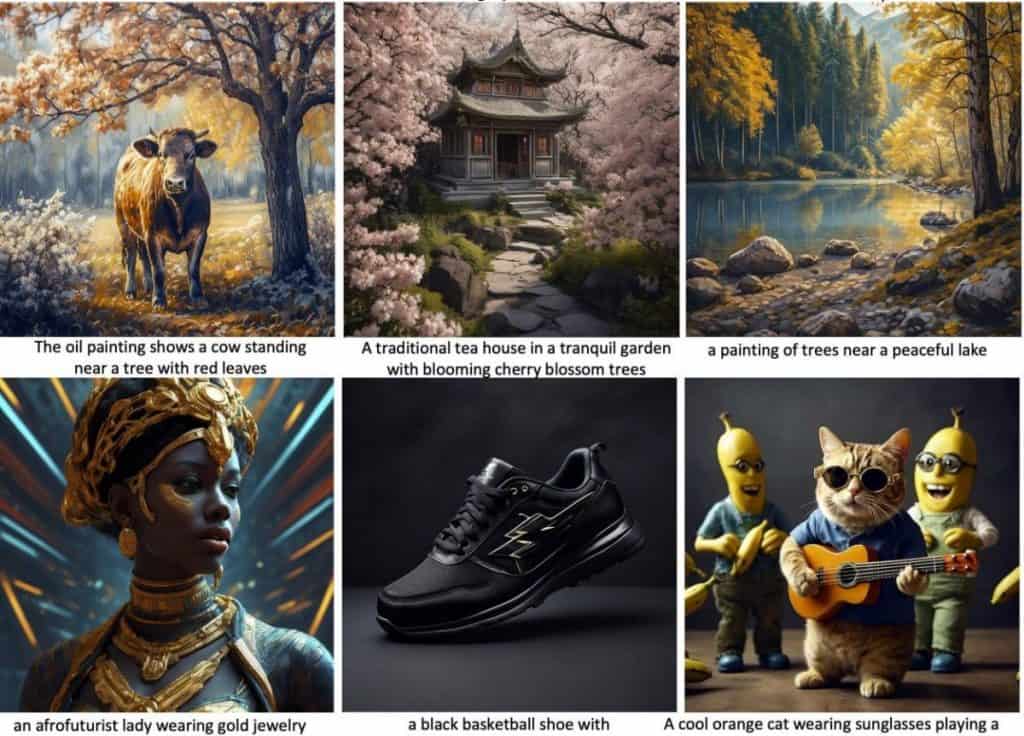

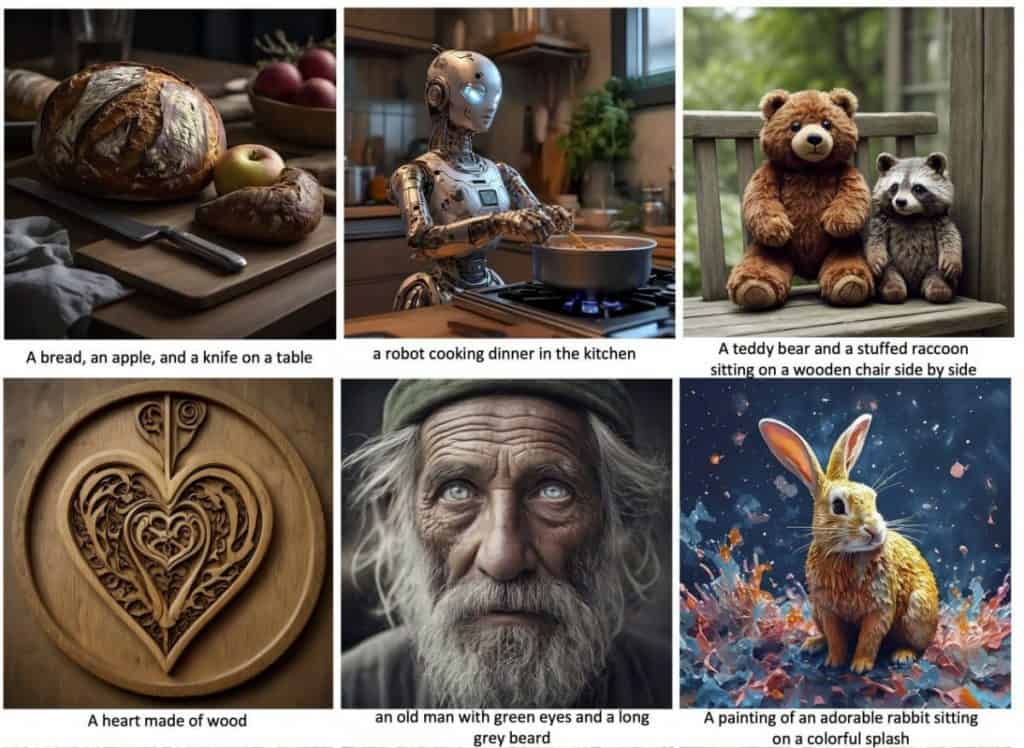

The initial stage involves pre-training a diffusion model on a vast dataset of 1.1 billion image-text pairs drawn from Meta AI’s internal resources. This phase relies on a U-Net model with 2.8 billion parameters. Two text encoders, CLIP ViT-L and T5-XXL, are used to condition the model on the text prompt. The model is designed to generate images at a resolution of 1024×1024 pixels.

The model’s dataset then undergoes rigorous automatic filtering, which narrows a pool of over a billion examples down to roughly 200,000 samples. Multiple filters are applied: classifiers that assess image aesthetics, mechanisms for discarding undesirable content, optical character recognition (OCR) for excluding text-heavy images, and filters based on resolution and aspect ratio. Popularity metrics, such as likes, also influence the filtering process.
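The filter stack described above can be pictured as a chain of keep-or-drop checks. The following is only a minimal sketch: the `Sample` fields, the `apply_filters` helper, and every threshold are hypothetical placeholders chosen for illustration, not values or names taken from the paper.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class Sample:
    """One image-text pair with precomputed signals (all fields hypothetical)."""
    aesthetic_score: float   # output of an aesthetics classifier, 0..1
    is_unsafe: bool          # flagged by an undesirable-content filter
    text_area_ratio: float   # share of the image covered by OCR-detected text
    width: int
    height: int
    likes: int


# Each filter returns True when the sample should be kept.
# Every threshold is an illustrative placeholder, not a value from the paper.
FILTERS: List[Callable[[Sample], bool]] = [
    lambda s: not s.is_unsafe,                   # discard undesirable content
    lambda s: s.aesthetic_score >= 0.8,          # aesthetics classifier
    lambda s: s.text_area_ratio <= 0.1,          # drop text-heavy images
    lambda s: min(s.width, s.height) >= 1024,    # resolution filter
    lambda s: 0.5 <= s.width / s.height <= 2.0,  # aspect-ratio filter
    lambda s: s.likes >= 100,                    # popularity signal
]


def apply_filters(samples: Iterable[Sample]) -> List[Sample]:
    """Keep only the samples that pass every filter in FILTERS."""
    return [s for s in samples if all(f(s) for f in FILTERS)]


if __name__ == "__main__":
    pool = [
        Sample(0.9, False, 0.02, 2048, 1536, 500),  # passes every check
        Sample(0.3, False, 0.02, 2048, 1536, 500),  # low aesthetic score
        Sample(0.9, False, 0.60, 2048, 1536, 500),  # text-heavy
    ]
    print(len(apply_filters(pool)), "of", len(pool), "samples kept")
```

Because each check is an independent predicate, filters can be added, removed, or re-tuned without touching the rest of the chain, which suits a heuristic stage that is later backstopped by human review.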

In the next phase, human expertise takes center stage. Generalists, annotators with a comprehensive grasp of data annotation, assess the remaining 200,000 images and assemble a subset of 20,000. The primary objective here is to identify and remove significantly subpar images that the heuristics in the preceding step failed to catch.

Emu’s Image Generation Prowess

Next, a team of photography specialists, highly knowledgeable in photographic principles, filters the images further. Their goal is to identify and preserve only the images with the highest aesthetic quality. They consider factors such as composition, lighting, color schemes, contrast, thematic relevance, and backgrounds.

The final step is to write high-quality text annotations for the resulting curated dataset of 2,000 image-text pairs.
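To put the curation funnel in perspective, the short sketch below computes how much of the data survives each stage, using only the dataset sizes quoted in this article. The stage labels and the `retention_rates` helper are illustrative names, not terms from the paper.

```python
# Dataset sizes at each curation stage, as quoted in this article.
stages = [
    ("pre-training pool", 1_100_000_000),
    ("after automatic filtering", 200_000),
    ("after generalist review", 20_000),
    ("after specialist selection", 2_000),
]


def retention_rates(stages):
    """Return (stage name, fraction kept relative to the previous stage)."""
    return [
        (name, count / prev_count)
        for (_, prev_count), (name, count) in zip(stages, stages[1:])
    ]


for name, rate in retention_rates(stages):
    print(f"{name}: keeps {rate:.4%} of the previous stage")

# Integer division is exact here: 1,100,000,000 // 2,000 = 550,000.
pool_per_kept = stages[0][1] // stages[-1][1]
print(f"overall: about 1 image kept per {pool_per_kept:,} in the pool")
```

On these figures, each human review stage keeps about one image in ten, while the automatic filters do the bulk of the reduction.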

Lastly, the model is fine-tuned on this refined dataset for 15,000 steps with a batch size of 64. This batch size is small compared with those typically used to train large generative models. While the model may appear overtrained based on validation loss, human evaluations indicate otherwise. A similar phenomenon has been observed in language models.
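A quick calculation shows why validation loss might suggest overtraining. This is a back-of-the-envelope sketch that assumes the figures quoted above (15,000 steps, batch size 64, 2,000 image-text pairs) describe the whole fine-tuning run; the `passes_over_dataset` helper is a hypothetical name.

```python
def passes_over_dataset(steps: int, batch_size: int, dataset_size: int) -> float:
    """Average number of times each training example is seen."""
    samples_seen = steps * batch_size
    return samples_seen / dataset_size


steps, batch_size, dataset_size = 15_000, 64, 2_000

print("samples seen:", steps * batch_size)  # 960000
print("passes over the dataset:", passes_over_dataset(steps, batch_size, dataset_size))  # 480.0
```

Under that assumption, each curated image is seen roughly 480 times, which is consistent with a loss curve that looks overfit even when human raters prefer the resulting images.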

Through this multi-stage process, Meta AI achieves high-quality image generation. The methodology not only aims to enhance the practical benefits of its services but also underscores the significance of careful curation and human expertise in refining AI-generated content. For further details, you can explore the complete article.

About The Author

Damir is the team leader, product manager, and editor at Metaverse Post, covering topics such as AI/ML, AGI, LLMs, Metaverse, and Web3-related fields. His articles attract a massive audience of over a million users every month. He appears to be an expert with 10 years of experience in SEO and digital marketing. Damir has been mentioned in Mashable, Wired, Cointelegraph, The New Yorker, Inside.com, Entrepreneur, BeInCrypto, and other publications. He travels between the UAE, Turkey, Russia, and the CIS as a digital nomad. Damir earned a bachelor's degree in physics, which he believes has given him the critical thinking skills needed to be successful in the ever-changing landscape of the internet.
