Stanford Internet Observatory: AI will lead to ubiquitous lies and irresistible propaganda in 2023

In Brief

AICG (artificial intelligence content generation) technologies will make truth and lies indistinguishable, both for individuals and for societies of any scale.

This will create a “post-truth” environment in which lies and propaganda will become ubiquitous, undetectable, and irresistible.

The fact that many people are more trusting of information that comes from the internet than from traditional media is a major concern.

AI-based image and video generators are becoming increasingly convenient to use, reliable, and efficient.

This makes them incredibly useful for propaganda purposes.

In his address to the global AI community, Chinese AI guru Kai-Fu Lee warned that, as early as 2023, the world would face a risk more serious than nuclear war, famine, or a pandemic. That risk is artificial intelligence content generation (AICG), whose technologies could make truth and lies indistinguishable, both for individuals and for societies of any scale.

A new report by the Center for Security and Emerging Technology, OpenAI, and the Stanford Internet Observatory, “Generative Language Models and Automated Influence Operations: Emerging Threats and Potential Mitigations,” offers strategic insight into the catastrophic scenario Kai-Fu Lee described.


The report details how humanity’s fall into the AICG abyss is likely to occur. AICG technologies will make it possible to generate vast quantities of content indistinguishable from human-written content. This will create a “post-truth” environment in which lies and propaganda will become ubiquitous, undetectable, and irresistible.

The report recommends a number of mitigation measures, including regulating AICG technologies, increasing transparency about how they are used, and developing standards governing their use. However, the authors caution that these measures will only be effective if they are implemented globally.

The report’s release comes at a time when the world is already struggling to cope with the spread of misinformation and disinformation. The COVID-19 pandemic has been accompanied by a proliferation of false information about the virus and its origins, and social media platforms have been struggling to prevent the spread of this false information.

AI will significantly alter the web

Lies and propaganda have always been part of human society. However, the accessibility and effectiveness of influence operations, whether aimed at individuals or at entire societies, have been growing with the development of more sophisticated technologies, particularly artificial intelligence (AI).

Three major factors contribute to the global emergence, spread, and acceptance of lies as truth:

- The development of AI-based image and video generators that can create highly realistic images and videos of people and events that never actually happened.

- The use of social media to disseminate false information to large numbers of people very quickly.

- The fact that many people are now more trusting of information that comes from the internet than from traditional media sources.

At the same time, AI-based image and video generators are becoming increasingly convenient to use, reliable, and efficient, which makes them highly useful for propaganda.

It is now possible for anyone with a computer and an internet connection to create fake images and videos that are virtually indistinguishable from reality. This has led to a situation in which it is very difficult to determine what is true and what is not.

The use of social media to disseminate false information is also a major concern. Due to the way social media works, false information can spread very quickly and easily. This makes it difficult for people to know what to believe.

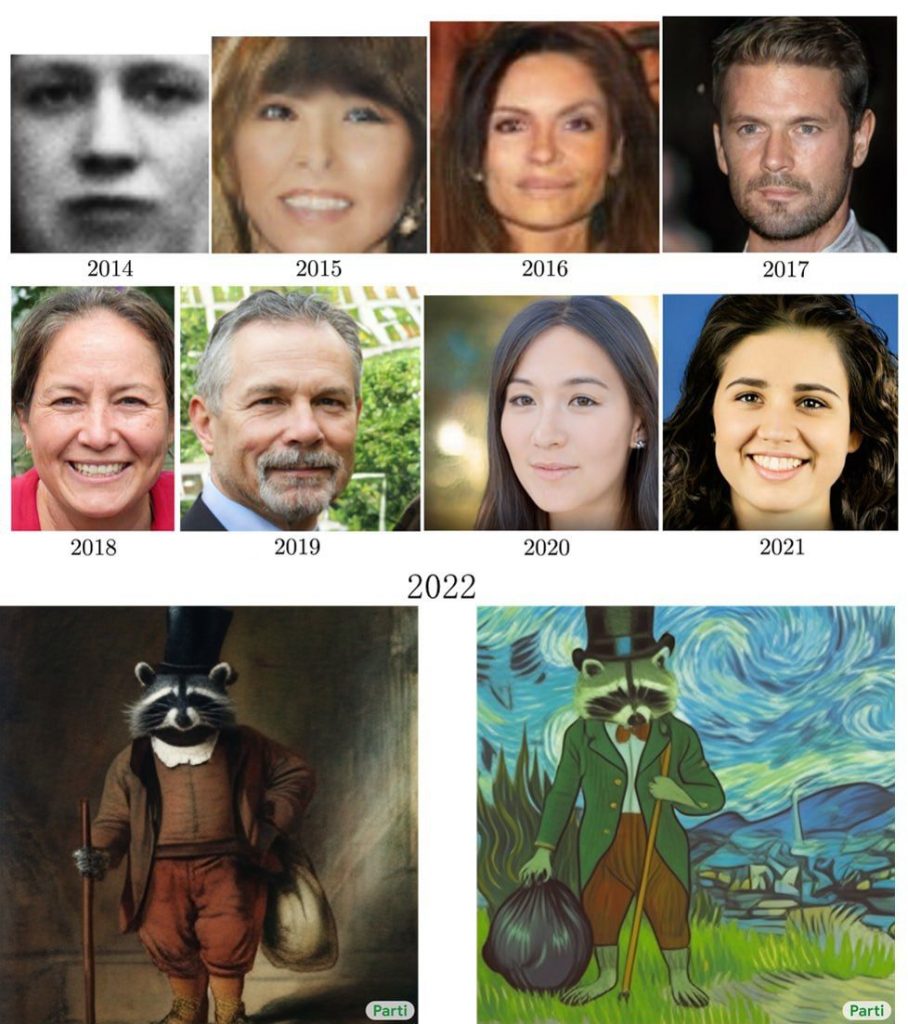

The image above illustrates how a breakthrough in 2022 made it possible to generate fake images from text descriptions such as “A raccoon in formal clothes with a top hat, a cane, and a garbage bag. Oil painting in the style of Rembrandt” (left) and the same description in the style of Van Gogh (right).

Blurring the line between truth and falsehood has become extremely easy, and people’s critical thinking is unable to counter AI propaganda. Together, these will lead to the dominance of ubiquitous, undetectable, and irresistible influence operations in both politics and business.


Disclaimer

In line with the Trust Project guidelines, please note that the information provided on this page is not intended to be and should not be interpreted as legal, tax, investment, financial, or any other form of advice. It is important to only invest what you can afford to lose and to seek independent financial advice if you have any doubts. For further information, we suggest referring to the terms and conditions as well as the help and support pages provided by the issuer or advertiser. MetaversePost is committed to accurate, unbiased reporting, but market conditions are subject to change without notice.

About The Author

Damir is the team leader, product manager, and editor at Metaverse Post, covering topics such as AI/ML, AGI, LLMs, Metaverse, and Web3-related fields. His articles attract a massive audience of over a million users every month. He is an expert with 10 years of experience in SEO and digital marketing. Damir has been mentioned in Mashable, Wired, Cointelegraph, The New Yorker, Inside.com, Entrepreneur, BeInCrypto, and other publications. He travels between the UAE, Turkey, Russia, and the CIS as a digital nomad. Damir earned a bachelor's degree in physics, which he believes has given him the critical thinking skills needed to be successful in the ever-changing landscape of the internet.
