I-JEPA: The Next Breakthrough in AI, Bringing Us Closer to AGI

In Brief

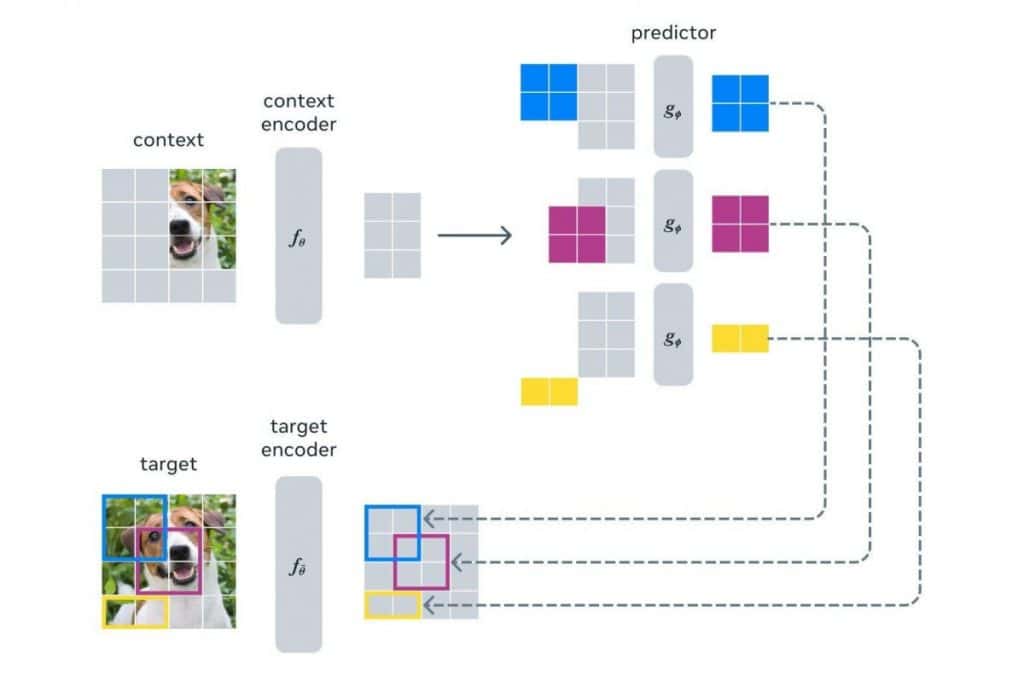

I-JEPA is a self-supervised learning method for image understanding. It learns semantic features without relying on hand-crafted invariances or on reconstructing pixel-level details.

It is also computationally efficient, which makes it practical to train.

Yann LeCun and his team at Meta have unveiled a new AI architecture called I-JEPA. Instead of memorizing surface details, the model is designed to learn abstract representations that capture how the world is structured. The goal? Faster learning, better planning, and quicker adaptation to new environments.

LeCun has criticized generative AI, the dominant approach today, arguing that it falls short of achieving true artificial general intelligence (AGI). With I-JEPA, Meta is charting a different course, treating vision, rather than language, as the key pathway to AGI.

Many self-supervised methods rely on hand-crafted data transformations (augmentations) to teach a model which changes to an image it should ignore. I-JEPA does not depend on these pre-specified invariances, so its representations are less biased toward the particular tasks those augmentations were designed for. It also skips filling in pixel-level details, which yields more meaningful, semantically rich representations.

One of the distinguishing features of I-JEPA is its predictive power. Rather than having a pixel decoder, it employs a predictor that operates in latent space. This predictor can be seen as a primitive world-model, capable of capturing spatial uncertainty within a static image. It predicts high-level information about unseen regions in the image, focusing on semantics rather than pixel-level specifics.
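To make the idea of a latent-space objective concrete, here is a minimal pure-Python sketch. The helper names (`linear_embed`, `toy_predictor`, `latent_l2_loss`) and the toy sizes are hypothetical and do not come from Meta's code. The point it illustrates: the loss compares predicted and target embeddings of the unseen patches, so no pixels are ever reconstructed.

```python
import random
from typing import List

Vector = List[float]


def linear_embed(patch: Vector, weights: List[Vector]) -> Vector:
    """Toy stand-in for an encoder: project one flattened patch to an embedding."""
    return [sum(w * x for w, x in zip(row, patch)) for row in weights]


def toy_predictor(context_embeddings: List[Vector], num_targets: int) -> List[Vector]:
    """Hypothetical placeholder predictor.

    In the I-JEPA paper the predictor is a narrow Vision Transformer conditioned on
    the positions of the target patches. Here we simply average the context
    embeddings and use that mean as the prediction for every target patch.
    """
    dim = len(context_embeddings[0])
    n = len(context_embeddings)
    mean = [sum(e[d] for e in context_embeddings) / n for d in range(dim)]
    return [list(mean) for _ in range(num_targets)]


def latent_l2_loss(predicted: List[Vector], targets: List[Vector]) -> float:
    """Average squared L2 distance between predicted and target patch embeddings.

    The comparison happens in representation space; there is no pixel decoder.
    """
    assert predicted and len(predicted) == len(targets)
    total = 0.0
    for p, t in zip(predicted, targets):
        total += sum((pi - ti) ** 2 for pi, ti in zip(p, t))
    return total / len(predicted)


if __name__ == "__main__":
    rng = random.Random(0)
    patch_dim, embed_dim = 16, 4  # toy sizes: 4x4 grayscale patches, 4-d embeddings
    # In I-JEPA, targets come from a separate target encoder (an exponential moving
    # average of the context encoder); here one toy weight matrix stands in for both.
    weights = [[rng.gauss(0, 0.1) for _ in range(patch_dim)] for _ in range(embed_dim)]
    patches = [[rng.random() for _ in range(patch_dim)] for _ in range(8)]
    context_idx, target_idx = [0, 1, 2, 3, 4], [5, 6, 7]
    context = [linear_embed(patches[i], weights) for i in context_idx]
    targets = [linear_embed(patches[i], weights) for i in target_idx]
    preds = toy_predictor(context, len(targets))
    print(f"latent loss: {latent_l2_loss(preds, targets):.4f}")
```

Because the target is an embedding rather than raw pixels, two completions that differ only in unpredictable texture can map to nearby representations and incur little loss, which is one way to read the article's claim that the predictor focuses on semantics.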

To visualize what the predictor learns, the I-JEPA team trained a stochastic decoder that maps the predicted representations back into pixel space to produce sketches. The sketches capture positional uncertainty and show high-level object parts in the correct pose, such as a dog’s head or a wolf’s front legs.

I-JEPA is also computationally efficient. Unlike approaches that require multiple views or computationally intensive data augmentations, it learns strong off-the-shelf semantic representations from a single view of each image. Meta reports pretraining a 632M-parameter Vision Transformer with I-JEPA on 16 A100 GPUs in under 72 hours.
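Learning from a single view can be illustrated with a masking sketch. Rather than creating augmented copies of an image, I-JEPA-style training samples one large context block and several smaller target blocks from the same image's patch grid, then removes the target patches from the context. The block sizes below (four targets covering roughly 15 to 20% of the grid each, one square context block covering 85 to 100%) roughly follow those described in the I-JEPA paper; the function names and the 16x16 grid (a 224-pixel image split into 14-pixel patches) are assumptions for illustration.

```python
import math
import random
from typing import List, Set, Tuple

Patch = Tuple[int, int]  # (row, col) position on the patch grid


def sample_block(rng: random.Random, grid: int,
                 scale_range: Tuple[float, float],
                 aspect_range: Tuple[float, float]) -> Set[Patch]:
    """Sample a rectangular block covering a random fraction of a grid x grid layout."""
    scale = rng.uniform(*scale_range)
    aspect = rng.uniform(*aspect_range)  # height / width
    area = scale * grid * grid
    h = max(1, min(grid, round(math.sqrt(area * aspect))))
    w = max(1, min(grid, round(math.sqrt(area / aspect))))
    top = rng.randint(0, grid - h)
    left = rng.randint(0, grid - w)
    return {(r, c) for r in range(top, top + h) for c in range(left, left + w)}


def multi_block_mask(grid: int = 16, num_targets: int = 4,
                     seed=None) -> Tuple[Set[Patch], List[Set[Patch]]]:
    """Return (context patches, list of target blocks) drawn from one image."""
    rng = random.Random(seed)
    targets = [sample_block(rng, grid, (0.15, 0.2), (0.75, 1.5))
               for _ in range(num_targets)]
    context = sample_block(rng, grid, (0.85, 1.0), (1.0, 1.0))
    # Drop any context patch inside a target block so the predictor cannot
    # copy the answer directly from its input.
    for block in targets:
        context -= block
    return context, targets


if __name__ == "__main__":
    context, targets = multi_block_mask(seed=0)
    print(len(context), [len(t) for t in targets])
```

Only one image is encoded per step here; the "views" are just different subsets of its patches, which avoids the cost of generating and encoding several augmented copies.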

The I-JEPA project represents a significant milestone in self-supervised learning for image understanding. Its ability to learn semantics without hand-crafted augmentations or pixel-level reconstruction opens up new possibilities for AI research and applications.

The initial strides have already been made: I-JEPA is trained to grasp the “big picture” in images rather than predicting every individual pixel. Meta has also open-sourced the code and model checkpoints, inviting developers and researchers to build on the work. The method could unlock more of the potential of self-supervised learning and pave the way for further advances in the field.

Excitement is building as Meta presents I-JEPA at CVPR 2023. Could this be the new frontier in AI development?

Stay tuned for updates as I-JEPA shapes the future of artificial intelligence, promising to bridge the gap between current AI capabilities and the dream of AGI.



About The Author

Damir is the team leader, product manager, and editor at Metaverse Post, covering topics such as AI/ML, AGI, LLMs, Metaverse, and Web3-related fields. His articles attract an audience of over a million users every month. He has 10 years of experience in SEO and digital marketing. Damir has been mentioned in Mashable, Wired, Cointelegraph, The New Yorker, Inside.com, Entrepreneur, BeInCrypto, and other publications. He travels between the UAE, Turkey, Russia, and the CIS as a digital nomad. Damir earned a bachelor's degree in physics, which he believes has given him the critical thinking skills needed to succeed in the ever-changing landscape of the internet.
