Stanford Study Reveals Alarming Lack of Transparency in AI Foundation Models

In Brief

Stanford researchers found that transparency in AI foundation models is declining and are calling on companies to disclose their data sources.

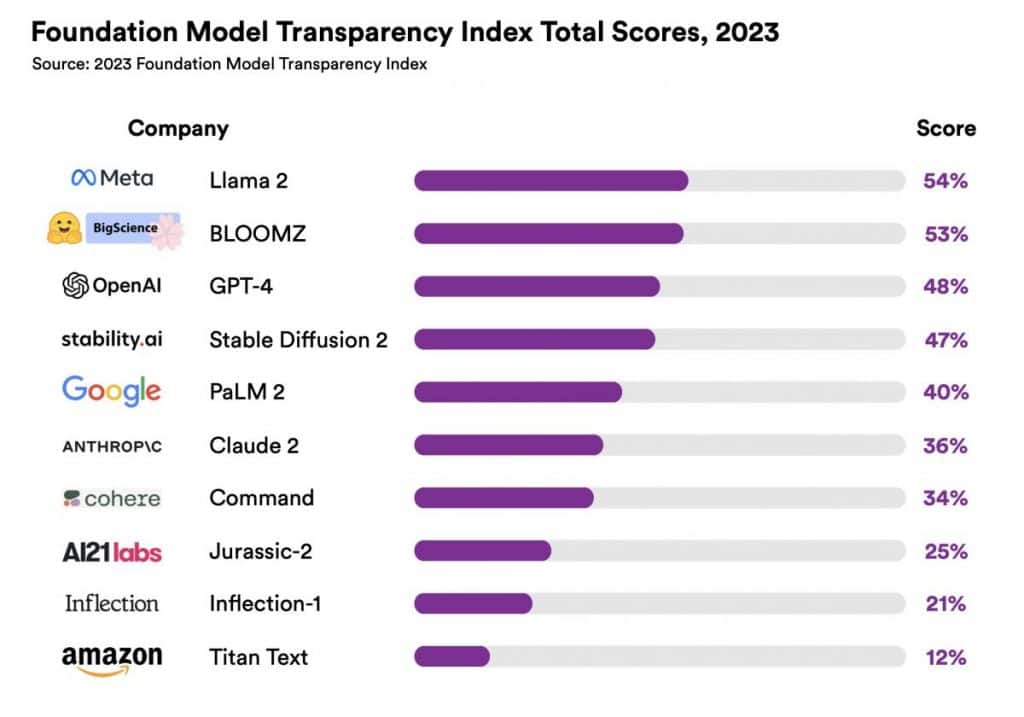

Among the 10 models assessed, Llama 2 was rated the most transparent with a score of 54%, followed by GPT-4 at 48%. Amazon’s Titan scored the lowest at 12%.

Stanford University researchers have unveiled a report, the Foundation Model Transparency Index, which evaluates the transparency of AI foundation models created by companies like OpenAI and Google.

The study raises concerns over declining transparency and highlights the need for companies to divulge information about data sources and human labor in model training, emphasizing the potential risks of opacity in the AI industry.

“It is clear over the last three years that transparency is on the decline while capability is going through the roof. We view this as highly problematic because we’ve seen in other areas like social media that when transparency goes down, bad things can happen as a result,” said Stanford professor Percy Liang, who worked on the Foundation Model Transparency Index.

Foundation models, which are pivotal in generative AI and automation advancements, have been graded poorly in the Foundation Model Transparency Index, with even the most transparent model, Meta’s Llama 2, scoring just 54 out of 100.

The lowest-ranked model was Amazon’s Titan, with a mere 12 out of 100. OpenAI’s GPT-4 scored 48 out of 100, while Google’s PaLM 2 scored 40.

The report’s authors hope that this study will serve as a catalyst for increased transparency in the AI field and act as a foundational reference point for governments as they grapple with the regulatory challenges of this burgeoning technology.

The European Union recently made progress toward enacting the world’s first comprehensive set of AI regulations, the “Artificial Intelligence Act.” Under the proposed rules, AI tools will be categorized by risk level, addressing concerns about biometric surveillance, the dissemination of misleading information, and discriminatory language. Companies deploying generative AI tools such as ChatGPT in the EU would have to disclose any copyrighted material used in their development, promoting transparency.


About The Author

Agne is a journalist who covers the latest trends and developments in the metaverse, AI, and Web3 industries for the Metaverse Post. Her passion for storytelling has led her to conduct numerous interviews with experts in these fields, always seeking to uncover exciting and engaging stories. Agne holds a Bachelor’s degree in literature and has an extensive background in writing about a wide range of topics including travel, art, and culture. She has also volunteered as an editor for an animal rights organization, where she helped raise awareness about animal welfare issues.
