Microsoft Has Introduced Multimodal Language Model Otter for Visual Understanding Based on the Massive Instructional Visual-Text Dataset MIMIC-IT

In Brief

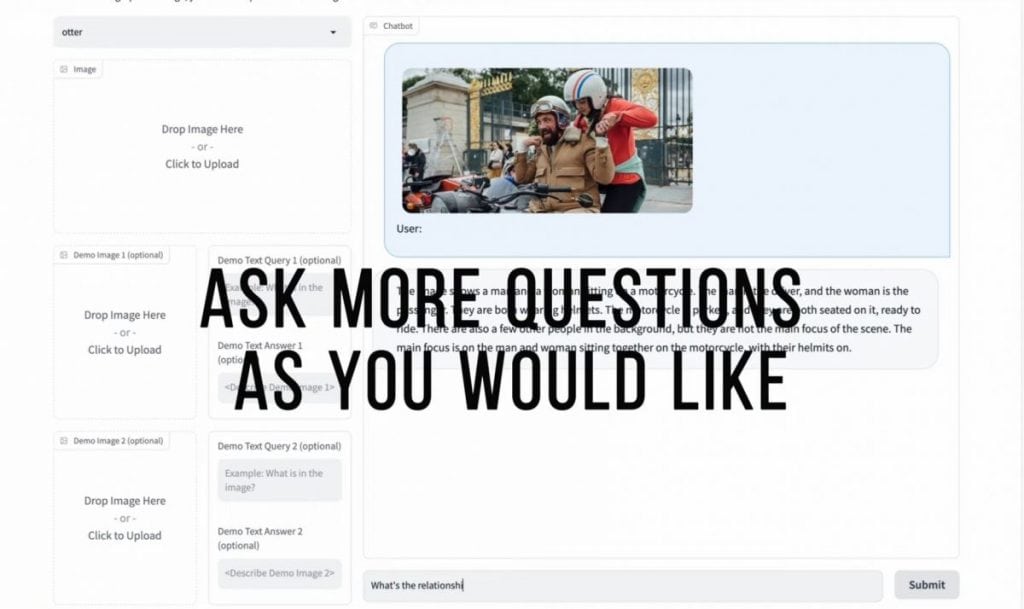

Otter is a visual language model (VLM) built on the OpenFlamingo platform, designed to understand and interact with visual content.

Otter, a visual language model (VLM) built on the OpenFlamingo platform, is aimed at improving the way we interact with visual content. As part of the Otter project, Microsoft has introduced a large visual-text instruction dataset called MIMIC-IT. The dataset contains 2.8 million multimodal instruction-response pairs, including 2.2 million unique instructions derived from images and videos. It was curated to simulate natural dialogue and covers scenarios such as image and video description, image comparison, question answering, and scene understanding. The instruction-response pairs were generated with the ChatGPT-0301 API at a cost of approximately $20k.

The MIMIC-IT dataset is used to train the Otter model, which is designed to understand visual scenes, reason about them, and draw logical conclusions. Each instruction-response pair in the dataset is accompanied by multimodal in-context information, forming conversational contexts that help the model learn perception, reasoning, and planning. To scale the annotation process, Microsoft employed an automatic annotation pipeline named Sythus, which combines human expertise with the capabilities of GPT to maintain the dataset’s quality and diversity.
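To make the idea of an instruction-response pair with in-context information more concrete, here is a minimal sketch in Python. The class names, field names, and the `to_conversation` helper are hypothetical and chosen for illustration only; they do not reflect the actual MIMIC-IT file format.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class InstructionResponse:
    """One instruction-response pair tied to images or video frames (hypothetical layout)."""
    instruction: str
    response: str
    image_ids: List[str] = field(default_factory=list)


@dataclass
class InContextExample:
    """A query pair plus related pairs that serve as its in-context information."""
    query: InstructionResponse
    in_context: List[InstructionResponse] = field(default_factory=list)


def to_conversation(example: InContextExample) -> List[Tuple[str, str]]:
    """Flatten the in-context pairs, then the query instruction, into (role, text) turns."""
    turns: List[Tuple[str, str]] = []
    for pair in example.in_context:
        turns.append(("user", pair.instruction))
        turns.append(("assistant", pair.response))
    # The query's response is left out: it is what the model should produce.
    turns.append(("user", example.query.instruction))
    return turns
```

In this sketch the in-context pairs come first, so the model sees worked examples before the instruction it has to answer.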

Using the MIMIC-IT dataset, Microsoft trained the Otter model, a large-scale VLM based on the OpenFlamingo platform. In evaluations on vision-language benchmarks, Otter showed strong multimodal perception, reasoning, and in-context learning. Human evaluations indicated that it aligns well with the user’s intentions, which makes it useful for interpreting and carrying out complex tasks given as natural language instructions.

Otter v0.2 adds support for video inputs, so it can take video frames and multiple images as in-context examples.
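Treating a video as a sequence of frames usually means picking a limited number of frames to pass to the model. The snippet below is a minimal sketch of uniform frame sampling in Python. It is an assumption for illustration, not Otter's documented preprocessing, and the function name `sample_frame_indices` is hypothetical.

```python
from typing import List


def sample_frame_indices(total_frames: int, num_samples: int) -> List[int]:
    """Pick evenly spaced frame indices from a clip (hypothetical helper)."""
    if total_frames <= 0 or num_samples <= 0:
        return []
    if num_samples >= total_frames:
        # Short clip: keep every frame.
        return list(range(total_frames))
    # Split the clip into equal segments and take the middle frame of each.
    step = total_frames / num_samples
    return [int(step * i + step / 2) for i in range(num_samples)]
```

For a 100-frame clip and four samples, this returns the indices 12, 37, 62, and 87.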

The release of the MIMIC-IT dataset, along with the instruction-response collection pipeline, benchmarks, and the Otter model, is a notable step for multimodal language processing. By making these resources available to researchers and developers, Microsoft aims to encourage innovation and collaboration. Otter and OpenFlamingo can be integrated into customized training and inference pipelines using the Hugging Face Transformers framework.

The MIMIC-IT dataset covers a wide range of real-life scenarios, helping vision-language models (VLMs) comprehend general scenes, reason about context, and differentiate between observations. This opens up possibilities such as egocentric visual assistant models that can answer questions like “Hey, do you think I left my keys on the table?”

MIMIC-IT is not limited to English. It also supports Chinese, Korean, Japanese, German, French, Spanish, and Arabic, which makes the dataset and the models trained on it useful to a larger global audience.

To generate high-quality instruction-response pairs, Microsoft uses Sythus, an automated pipeline that supplies system messages, visual annotations, and in-context examples as prompts for ChatGPT. This approach is intended to keep the generated instruction-response pairs reliable and accurate across multiple languages.
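The three prompt ingredients named above (a system message, visual annotations, and in-context examples) can be sketched as a chat-style message list. This is a minimal illustration in Python under stated assumptions: the `build_prompt` function, the dictionary keys `annotation` and `pairs`, and the JSON encoding of annotations are all hypothetical and are not taken from the actual pipeline. No API call is made here.

```python
import json
from typing import Dict, List


def build_prompt(
    system_message: str,
    in_context_examples: List[Dict],
    visual_annotation: Dict,
) -> List[Dict[str, str]]:
    """Assemble a chat-style prompt from the three ingredients (hypothetical layout)."""
    messages = [{"role": "system", "content": system_message}]
    for example in in_context_examples:
        # Each example shows an annotation and the pairs expected for it.
        messages.append({"role": "user", "content": json.dumps(example["annotation"])})
        messages.append({"role": "assistant", "content": example["pairs"]})
    # The final user turn holds the annotation that still needs pairs.
    messages.append({"role": "user", "content": json.dumps(visual_annotation)})
    return messages
```

The resulting list could then be sent to a chat model, with the reply parsed into new instruction-response pairs.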

About The Author

Damir is the team leader, product manager, and editor at Metaverse Post, covering topics such as AI/ML, AGI, LLMs, Metaverse, and Web3-related fields. His articles attract a massive audience of over a million users every month. He appears to be an expert with 10 years of experience in SEO and digital marketing. Damir has been mentioned in Mashable, Wired, Cointelegraph, The New Yorker, Inside.com, Entrepreneur, BeInCrypto, and other publications. He travels between the UAE, Turkey, Russia, and the CIS as a digital nomad. Damir earned a bachelor's degree in physics, which he believes has given him the critical thinking skills needed to be successful in the ever-changing landscape of the internet.
