VALL-E X: The Most Dangerous Scammy AI Voice Cloning Tool Now Open Source

In Brief

An open-source implementation of Microsoft’s VALL-E X zero-shot TTS model has been released, allowing users to explore advanced text-to-speech synthesis and voice cloning.

The model supports fluent speech synthesis in English, Chinese, and Japanese, zero-shot voice cloning, speech emotion control, zero-shot cross-lingual speech synthesis, accent control, and acoustic environment adaptation.

VALL-E X runs on both CPU and GPU, and 6GB of GPU VRAM is enough to run it without offloading.

An open-source implementation of Microsoft’s VALL-E X zero-shot TTS model has been released, giving users hands-on access to advanced text-to-speech synthesis and voice cloning. Microsoft’s original research paper was published without the code or pre-trained models needed to try the model directly. With this release, the technology community gains access to a working tool for next-generation TTS.

VALL-E X is a multilingual text-to-speech model introduced by Microsoft. While the original research paper was informative, it could not be applied in practice because no code or pre-trained models accompanied it. To bridge this gap, the developers behind the open-source project reproduced the results and trained their own VALL-E X model. The result is now available to the public, letting a broader audience try cutting-edge TTS technology for themselves.

VALL-E X offers several notable features:

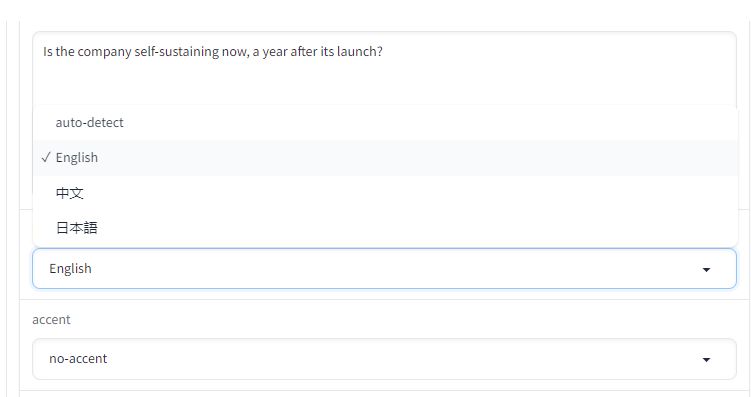

- Multilingual TTS: The model supports fluent speech synthesis in three languages: English, Chinese, and Japanese. Users can experience natural and expressive speech synthesis across these languages.

- Zero-shot Voice Cloning: Given a short 3 to 10-second recording of a speaker it has never heard before, VALL-E X can generate personalized, high-quality speech that mirrors the speaker’s unique vocal characteristics.

- Speech Emotion Control: VALL-E X can infuse synthesized speech with specific emotions, adding a layer of expressiveness to the audio output that aligns with the provided acoustic prompt.

- Zero-shot Cross-Lingual Speech Synthesis: The model can produce personalized speech for a monolingual speaker in a language they do not speak, without compromising fluency or introducing a foreign accent.

- Accent Control: VALL-E X lets users experiment with accents, for example speaking Chinese with an English accent or English with a Chinese accent.

- Acoustic Environment Adaptation: The model accommodates varying audio prompts, adapting to the acoustic environment of the input to deliver a natural and immersive speech generation experience.

Beyond English, the model also covers Chinese and Japanese, and the developers report strong performance in all three languages.


With VALL-E X, users can create a voice prompt from another person’s voice, a character’s voice, or their own. A speech sample of 3 to 10 seconds, along with its transcript, is all that is needed to build a distinct voice prompt. A graphical interface is also provided, which makes voice cloning and multilingual speech synthesis accessible to non-programmers.
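The two requirements for a voice prompt described above (a 3 to 10-second sample plus a transcript) can be checked before any cloning is attempted. The following is a minimal sketch that assumes the sample is an uncompressed WAV file; the helper names `wav_duration_seconds` and `check_prompt` are hypothetical and are not part of VALL-E X.

```python
import wave
from typing import List

MIN_SECONDS = 3.0   # lower bound quoted in the article
MAX_SECONDS = 10.0  # upper bound quoted in the article


def wav_duration_seconds(path: str) -> float:
    """Return the duration of a PCM WAV file in seconds."""
    with wave.open(path, "rb") as wav:
        return wav.getnframes() / wav.getframerate()


def check_prompt(path: str, transcript: str) -> List[str]:
    """Hypothetical pre-flight check; an empty list means the sample looks usable."""
    problems = []
    duration = wav_duration_seconds(path)
    if not MIN_SECONDS <= duration <= MAX_SECONDS:
        problems.append(
            f"duration {duration:.1f}s is outside "
            f"{MIN_SECONDS:.0f}-{MAX_SECONDS:.0f}s"
        )
    if not transcript.strip():
        problems.append("transcript is empty")
    return problems
```

Calling `check_prompt("sample.wav", "Hello, this is my voice.")` would return an empty list for a valid sample, or a list of human-readable problems otherwise.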

Notably, VALL-E X runs on both CPU and GPU (PyTorch 2.0+, CUDA 11.7, and CUDA 12.0). The model is compact enough that 6GB of GPU VRAM is sufficient to run it without offloading.

In comparison to the Bark model, VALL-E X offers several advantages:

- Lighter, occupying only three-quarters of the space.

- Faster, with a 4x speed-up.

- Superior quality in Chinese and Japanese languages.

- Cross-lingual speech synthesis without foreign accents.

- Easy voice cloning capabilities.

A GPU with 6GB of VRAM is enough to run VALL-E X. There is, however, a limit that matters when generating longer text: the combined length of the audio prompt and the generated audio must stay below 22 seconds.
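The 22-second limit can be turned into simple arithmetic: whatever the audio prompt uses is subtracted from what remains for generated speech. The sketch below illustrates that budget, together with one plausible way to keep each generation short by working sentence by sentence. Both function names are hypothetical, and the sentence-splitting strategy is an assumption for illustration rather than the project's documented method.

```python
import re
from typing import List

MAX_TOTAL_SECONDS = 22.0  # prompt + generated audio limit quoted in the article


def generation_budget(prompt_seconds: float) -> float:
    """Seconds left for generated audio once the prompt is accounted for."""
    return max(0.0, MAX_TOTAL_SECONDS - prompt_seconds)


def split_sentences(text: str) -> List[str]:
    """Split text after sentence-ending punctuation (assumed chunking strategy).

    Latin punctuation must be followed by whitespace so decimals such as
    "3.5" stay intact; CJK punctuation splits without needing whitespace.
    """
    parts = re.split(r"(?<=[.!?])\s+|(?<=[。！？])", text)
    return [part for part in parts if part]
```

For example, a 10-second prompt leaves `generation_budget(10.0)`, that is 12 seconds, for the generated audio, so a shorter prompt leaves more room for each generated chunk.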

VALL-E X is released under the MIT License, which makes multilingual text-to-speech synthesis and voice cloning freely available for others to use, study, and build on.



About The Author

Damir is the team leader, product manager, and editor at Metaverse Post, covering topics such as AI/ML, AGI, LLMs, Metaverse, and Web3-related fields. His articles attract a massive audience of over a million users every month. He appears to be an expert with 10 years of experience in SEO and digital marketing. Damir has been mentioned in Mashable, Wired, Cointelegraph, The New Yorker, Inside.com, Entrepreneur, BeInCrypto, and other publications. He travels between the UAE, Turkey, Russia, and the CIS as a digital nomad. Damir earned a bachelor's degree in physics, which he believes has given him the critical thinking skills needed to be successful in the ever-changing landscape of the internet.
