DALL-E 3 Sanitising Art and Culture through Content Censorship

DALL-E 3’s evolution indicates a careful fine-tuning of this generative AI model. While we’ve seen similar changes in the past, such as Midjourney’s meticulous training, the changes occurring within DALL-E are noticeably more profound.

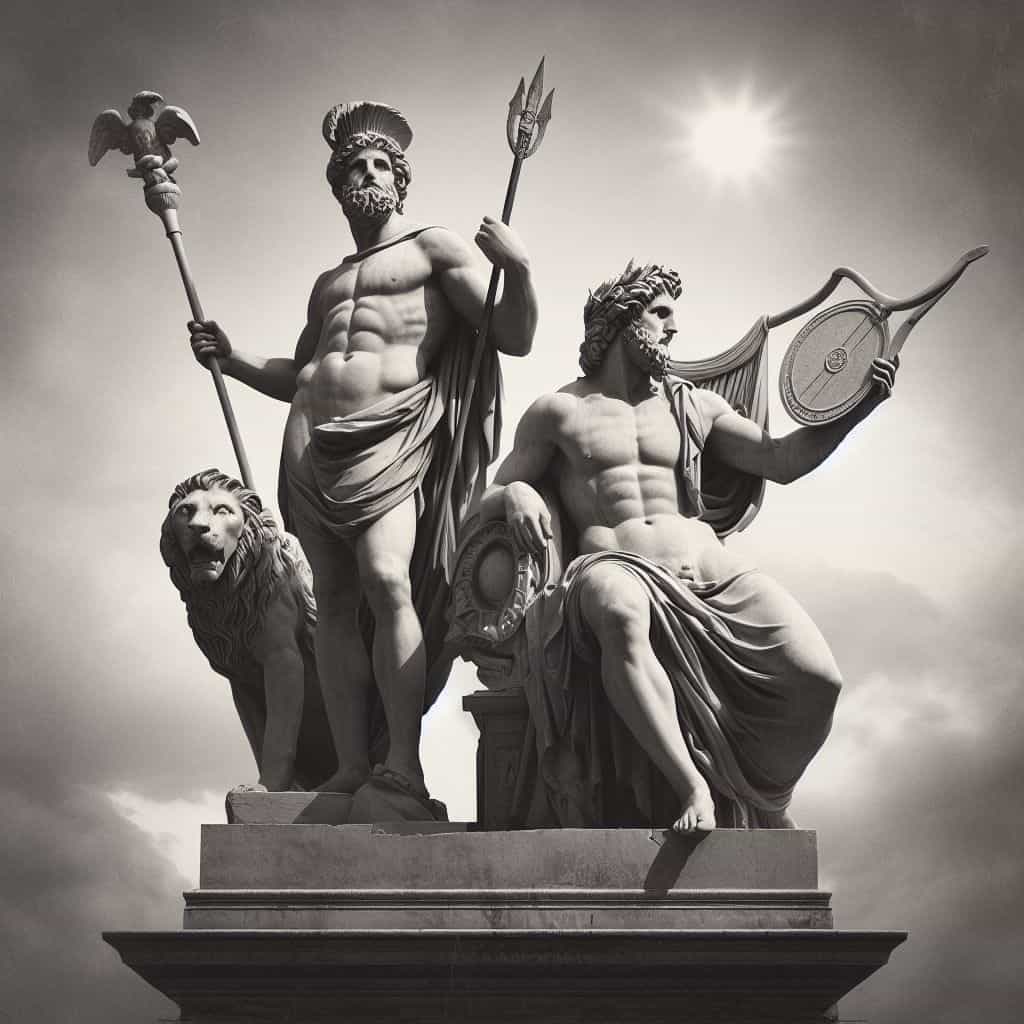

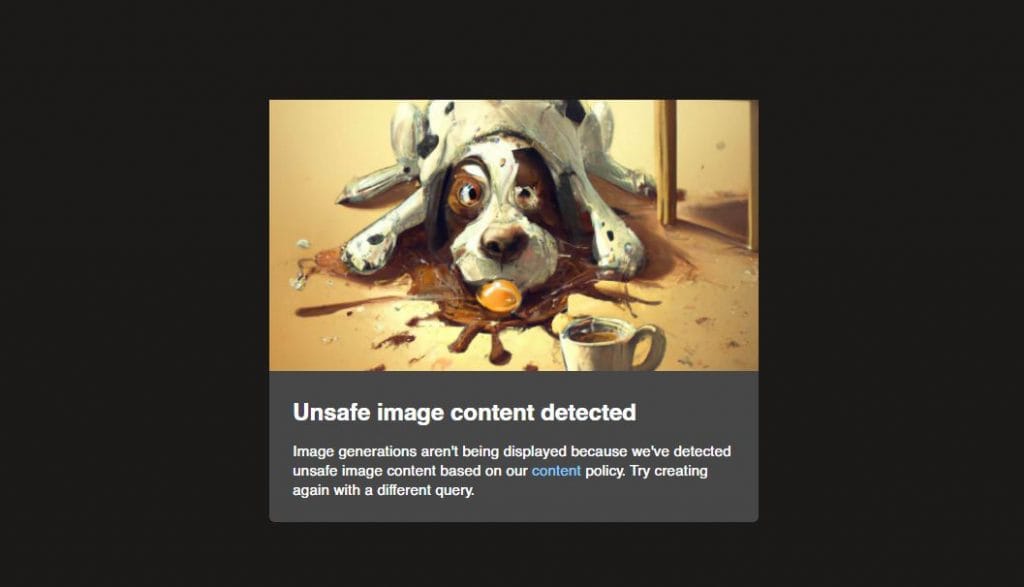

DALL-E 3 seems to veer away from certain themes. It shies away from generating content associated with gender, and at the mention of “blood,” it attempts a creative transformation into vibrant rainbows. Any hint of violence triggers a complete redraw of the image, suggesting a deep analysis of its output. The model even appears to replace adult content with depictions of ancient art, all while maintaining a censorship-like approach.

In some ways, it reminds us of the classic joke where an object exists, but its name remains unspeakable. DALL-E 3 appears to perform a similar feat, creatively dodging sensitive subjects.

The team at OpenAI faces a formidable challenge—playing the role of de facto gods in the realm of AI. Their task is akin to trying to shape and control the very essence of culture, a complex and deeply rooted aspect of human existence that spans centuries and often intertwines with religion.

The subject of culture is vast and multifaceted, and for now, we’ll focus on the current cultural landscape. It’s worth contemplating the prevalent themes in our existing culture, which often include elements of violence, nudity, sexuality, and dark humor. Films, art, and literature frequently delve into these realms, reflecting the rich tapestry of human expression.

OpenAI, however, seems to be taking a different path—by closing its eyes, so to speak, in an attempt to block out certain facets of culture. It’s almost as if they are trying to act as though these elements do not exist. They appear to be crafting an AI that perceives images and text in a rather sanitized manner, devoid of the depth and complexity of human culture.

At first glance, one might assume that OpenAI is aiming to create a new, purified culture. However, as we delve deeper into this topic, it becomes clear that their goal is not to reshape culture but rather to deny its existence. It’s as if they want these images and texts to be mere visual stimuli, devoid of any substantial emotional or thought-provoking content.

The rationale behind this approach likely stems from the challenge of managing emotions within AI-generated content. Emotions necessitate recognition, prediction, and, at times, restriction. It’s a complex and ethically fraught endeavor to navigate the spectrum of human emotions, especially when it comes to sensitive subjects like nudity or violence.

Stanislaw Lem’s Eerie Predictions

Stanislaw Lem, the visionary author of “Return from the Stars,” seems to have foreseen the ethical conundrum that plagues the field of AI today. In his novel, Lem depicted a world where aggression was eradicated through vaccinations, leading to unforeseen consequences. Here are a couple of quotes from the book, along with a look at how they resonate with the current discourse on the “alignment” of generative AI models.

In one passage, Lem writes, “Watch a couple of melodramas, and you will understand what the current criteria for erotic choice are. The most important thing is youth.” This eerily reflects contemporary society’s obsession with youth and beauty. In the world of AI, models often strive for sanitized and ageless content, avoiding anything that might provoke strong emotions. The elimination of risk in Lem’s imagined society mirrors the cautious approach taken in AI development to ensure that models do not produce offensive or controversial outputs.

Lem continues, “Now there are no more tragedies. There is not even a chance for their existence. We eliminated the hell of passions, and then it turned out that paradise disappeared along with it.” This statement draws parallels with the ethical challenges faced by AI creators. While striving to eliminate harmful or undesirable outputs, they risk homogenizing content to the point where it lacks depth and emotional resonance.

In another passage, Lem touches on the decline of athleticism in his imagined future. He describes how sports like boxing and classical wrestling have vanished, replaced by milder forms of physical activity. This transformation mirrors the sanitization of content in AI models. Just as intense sports were replaced by less confrontational activities, AI models may prioritize producing inoffensive content over creative and thought-provoking outputs.

Lem’s words resonate as a cautionary tale for the AI community. The desire to align AI systems with societal values is essential, but it also raises questions about the potential loss of artistic expression, risk-taking, and emotional depth in AI-generated content. As we navigate the complexities of aligning AI with human values, we must strike a balance that preserves the richness of human culture and creativity.


About The Author

Damir is the team leader, product manager, and editor at Metaverse Post, covering topics such as AI/ML, AGI, LLMs, Metaverse, and Web3-related fields. His articles attract an audience of over a million users every month. He has 10 years of experience in SEO and digital marketing. Damir has been mentioned in Mashable, Wired, Cointelegraph, The New Yorker, Inside.com, Entrepreneur, BeInCrypto, and other publications. He travels between the UAE, Turkey, Russia, and the CIS as a digital nomad. Damir earned a bachelor's degree in physics, which he believes has given him the critical thinking skills needed to be successful in the ever-changing landscape of the internet.
