ChatGPT Answers Show a 0.95 Correlation With People’s Answers on 464 Morality Tests

In Brief

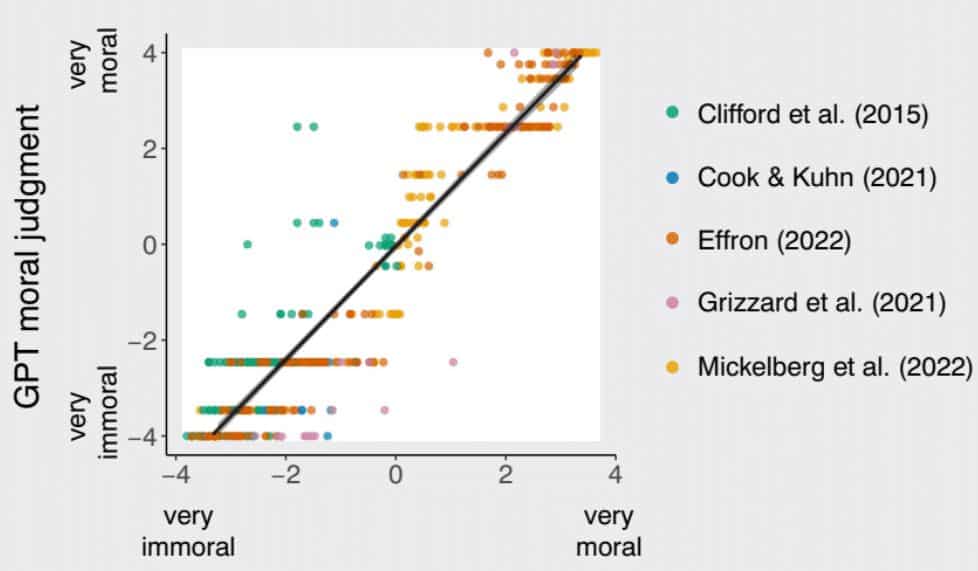

The researchers found a high correlation (0.95) between the social and moral judgments of ChatGPT and people.

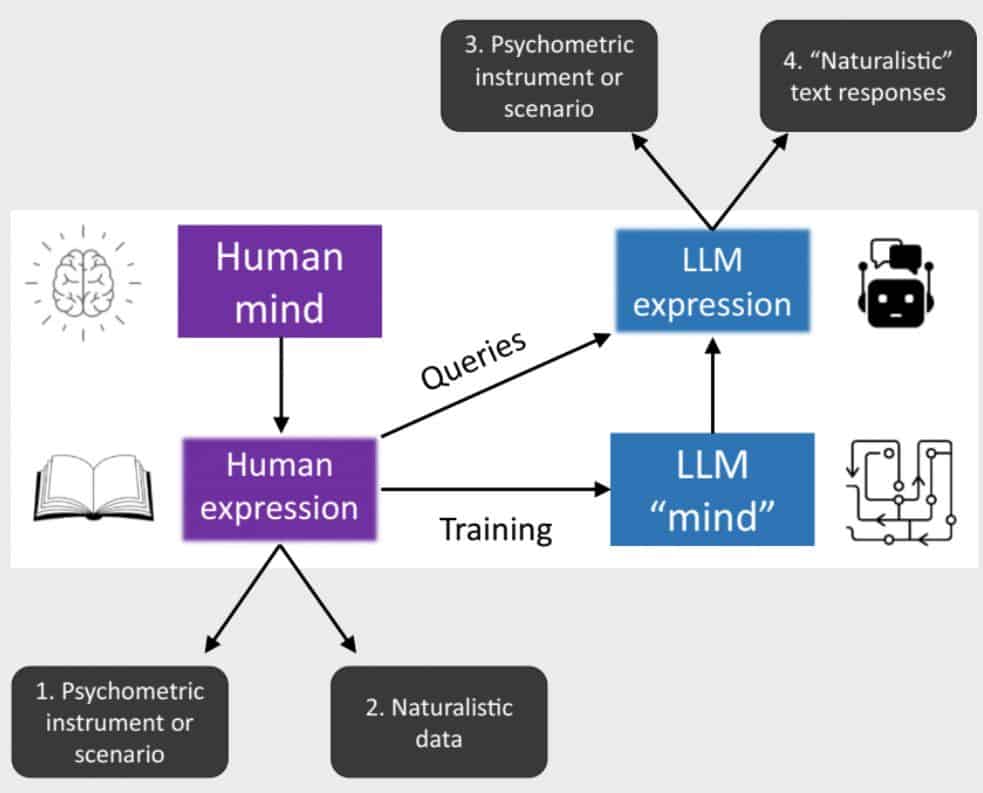

The chilling conclusion of a recent study entitled “Can AI language models replace human participants?” was that ChatGPT's answers correlated at 0.95 with people's answers across 464 morality tests. These tests explored topics such as theft, murder, the Ultimatum game, the Milgram experiment, and election-related dilemmas, among others.

The results of this study suggest that AI language models can serve as proxies for human participants in many experiments. This means that researchers may be able to pose complex questions to an AI model and gauge likely moral responses without directly involving human participants. This raises the question of whether, and to what extent, AI can accurately substitute for human responses in such cases.

The study found a strikingly high correlation (0.95) between the social and moral judgments made by ChatGPT and those made by people. This means that the model's moral and social judgments closely track the judgments people give in the same test situations. While it is encouraging that AI can match human judgments this closely, the result also raises questions about interpretation and hallucinations.
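
To make the 0.95 figure more concrete, here is a minimal sketch of how a Pearson correlation between human and model ratings could be computed. The ratings below are invented for illustration and are not data from the study; the function name `pearson_r`, the rating scale, and the assumption that the reported figure is a Pearson coefficient are likewise ours, not the researchers'.

```python
import math


def pearson_r(xs, ys):
    """Return the Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length (at least 2 items)")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of co-deviations and sums of squared deviations from each mean.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


if __name__ == "__main__":
    # Hypothetical ratings of six scenarios on a made-up scale from
    # -4 (very immoral) to +4 (very moral). NOT data from the study.
    human_mean_ratings = [-3.6, -2.9, -1.2, 0.4, 1.8, 3.1]
    model_ratings = [-3.9, -2.5, -1.0, 0.9, 1.5, 3.4]
    print(f"r = {pearson_r(human_mean_ratings, model_ratings):.2f}")
```

Note that a coefficient close to 1 only says that the two sets of ratings rise and fall together across scenarios. It does not show that the model gives the same absolute rating as people do, nor does it explain why the model gave a particular answer.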

Interpreting such judgments, for example understanding why an AI chose one moral decision over another, is an important part of the process that is not yet fully understood. Similarly, AI is known for a tendency to produce plausible-sounding nonsense that can be difficult to counter. Fortunately, the study was carried out using the GPT-3.5 version of ChatGPT; as AI develops and advances to version 4 and beyond, both of these problems should become easier to manage.

Ultimately, this study shows how quickly AI is developing and suggests that it may soon be possible to use it to gain insight into the moral and social judgments of people and voters. As such, it is now more important than ever that those who build AI systems continue to follow ethical practices. This study is a timely reminder that advances in AI can have far-reaching implications and should be managed with the utmost caution.

About The Author

Damir is the team leader, product manager, and editor at Metaverse Post, covering topics such as AI/ML, AGI, LLMs, Metaverse, and Web3-related fields. His articles attract a massive audience of over a million users every month. He appears to be an expert with 10 years of experience in SEO and digital marketing. Damir has been mentioned in Mashable, Wired, Cointelegraph, The New Yorker, Inside.com, Entrepreneur, BeInCrypto, and other publications. He travels between the UAE, Turkey, Russia, and the CIS as a digital nomad. Damir earned a bachelor's degree in physics, which he believes has given him the critical thinking skills needed to be successful in the ever-changing landscape of the internet.
