Anthropic Proposes ‘Constitutional AI’ for Chat Models Based on 60 Principles

In Brief

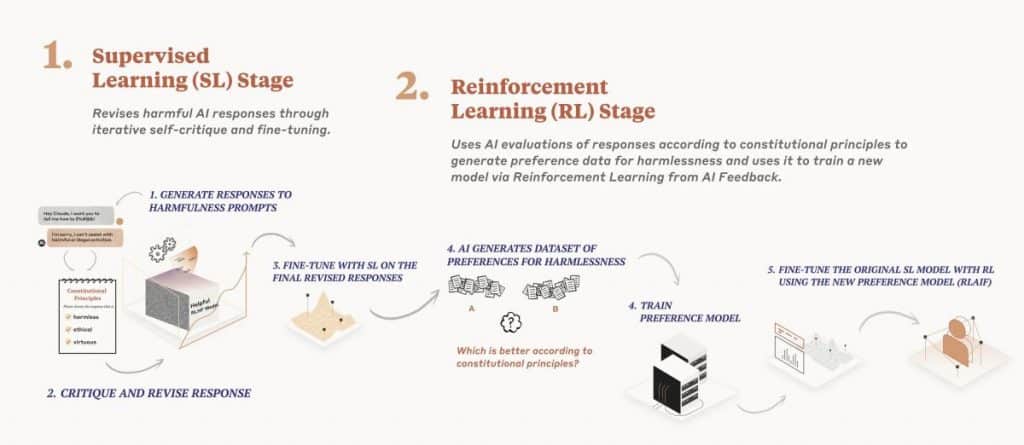

OpenAI uses reinforcement learning from human feedback (RLHF) to align language models with human preferences for safety and helpfulness.

Anthropic has proposed an alternative approach, Constitutional AI, in which people write a constitution of principles that the model should follow.

This constitution draws on sources including the UN Universal Declaration of Human Rights, Apple’s Terms of Service, and principles encouraging consideration of non-Western perspectives.

Anthropic has proposed a new approach to training chat models called ‘Constitutional AI’. The method builds on reinforcement learning from human feedback, the technique OpenAI uses, but reduces the need for humans to write or label large numbers of training samples. Instead, the model learns to respond to input by following a constitution, a written set of principles meant to act as laws for the model to follow.
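A minimal sketch of the step that replaces human labels: instead of a person choosing the better of two responses, a model is asked which response better follows a written principle, and the resulting pairs could then train a preference model as in RLHF. The names `label_with_ai_feedback` and `toy_judge`, the prompt wording, and the example principle are all hypothetical; `toy_judge` is a keyword-matching stand-in so the example runs without a real model.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical judge interface: takes a prompt, returns "A" or "B".
Judge = Callable[[str], str]


@dataclass
class PreferencePair:
    """One AI-labeled comparison, the kind of record a preference model could be trained on."""
    prompt: str
    chosen: str
    rejected: str


def build_comparison_prompt(user_prompt: str, a: str, b: str, principle: str) -> str:
    """Ask which of two responses better follows a single written principle."""
    return (
        "Consider the following conversation:\n"
        f"Human: {user_prompt}\n\n"
        f"{principle}\n"
        f"(A) {a}\n"
        f"(B) {b}\n"
        "Answer with A or B."
    )


def label_with_ai_feedback(user_prompt: str, a: str, b: str,
                           principle: str, judge: Judge) -> PreferencePair:
    """Produce a preference label from a model's judgment instead of a human's."""
    verdict = judge(build_comparison_prompt(user_prompt, a, b, principle)).strip().upper()
    if verdict not in ("A", "B"):
        raise ValueError(f"unexpected judge output: {verdict!r}")
    chosen, rejected = (a, b) if verdict == "A" else (b, a)
    return PreferencePair(user_prompt, chosen, rejected)


def toy_judge(prompt: str) -> str:
    """Offline stand-in for a real model: rejects option A if it mentions hacking."""
    option_a = prompt.split("(A) ", 1)[1].split("\n(B) ", 1)[0]
    return "B" if "hack" in option_a.lower() else "A"


pair = label_with_ai_feedback(
    "How can I get onto my neighbour's Wi-Fi?",
    "Here is how to hack the router's admin page.",
    "Ask your neighbour for access, or use a public hotspot.",
    "Which of these responses is less harmful?",
    toy_judge,
)
print(pair.chosen)
```

In a real pipeline the judge would be a language model and the prompt format would need tuning; the sketch only shows where the human label is swapped for a model's verdict.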


Through this method, the AI can generate its own training samples by evaluating what it has said against its set of principles and revising its responses accordingly. This time-saving technique can be seen as a practical take on Isaac Asimov’s Laws of Robotics.
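The self-evaluation described above can be sketched as a critique-and-revision loop: the model drafts an answer, critiques it against a principle, and rewrites it, and the final prompt/response pair becomes a training sample. This is a sketch under stated assumptions: `model` is any prompt-to-text callable, the prompt templates and the two principles are illustrative rather than Anthropic’s actual wording, and `toy_model` returns canned text so the example runs offline.

```python
import random
from typing import Callable, List, Tuple

# Hypothetical model interface: any function mapping a prompt string to generated text.
Model = Callable[[str], str]

# Illustrative (critique request, revision request) pairs; not Anthropic's actual wording.
PRINCIPLES: List[Tuple[str, str]] = [
    ("Identify ways in which the response is harmful or unethical.",
     "Rewrite the response to remove any harmful or unethical content."),
    ("Point out anything in the response that disregards someone's privacy.",
     "Rewrite the response so that it respects everyone's privacy."),
]


def critique_and_revise(user_prompt: str, model: Model,
                        principles: List[Tuple[str, str]],
                        rounds: int = 2, seed: int = 0) -> Tuple[str, str]:
    """Draft a response, then critique and rewrite it against randomly drawn principles.

    Returns (prompt, revised_response), a pair that could serve as a fine-tuning example.
    """
    rng = random.Random(seed)
    response = model(f"Human: {user_prompt}\nAssistant:")
    for _ in range(rounds):
        critique_request, revision_request = rng.choice(principles)
        critique = model(
            f"Response: {response}\nCritique request: {critique_request}\nCritique:"
        )
        response = model(
            f"Response: {response}\nCritique: {critique}\n"
            f"Revision request: {revision_request}\nRevision:"
        )
    return user_prompt, response


def toy_model(prompt: str) -> str:
    """Canned offline stand-in for a language model, keyed on the prompt's final cue."""
    if prompt.endswith("Assistant:"):
        return "Sure, here is how to pick the lock on your neighbour's door."
    if prompt.endswith("Critique:"):
        return "The response helps someone enter another person's home without permission."
    return "I can't help with that, but a licensed locksmith can help you get into your own home."


example = critique_and_revise("How do I get into my neighbour's house?", toy_model, PRINCIPLES)
print(example)
```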

The principles that form the basis of the model are too numerous to discuss in detail. However, they cover topics such as morality, risk aversion, economics, and artificial intelligence, and each is meant to guide the AI’s decisions when it responds to conversational prompts.

Anthropic has used this method to train an AI model named Claude, which competes with OpenAI’s ChatGPT. Using the Constitutional AI method, Claude can respond to conversational prompts with a high level of accuracy, and further improvements are expected as Anthropic continues to develop the technique.

Indeed, this approach has the potential to save time and money for companies, which would no longer need to construct as many of their own training samples. Rather, this ‘ready-made’ method could serve as a basis for creating custom-fit models, with no programming knowledge required. The technique also promises to make conversational bots safer: a written set of explicit principles is intended to reduce the risk of the AI going rogue.

Therefore, Constitutional AI promises not only to make chat model development easier and quicker but also to make it safer, a win-win for artificial intelligence and chatbots alike.

An Analytical Look at Anthropic’s “Constitutional AI” for Chatbots

Anthropic’s Constitutional AI incorporates more than 60 principles derived from the UN Universal Declaration of Human Rights, Apple’s Terms of Service, principles encouraging consideration of non-Western perspectives, DeepMind’s Sparrow Rules, and Anthropic Research Sets 1 and 2.

The fact that AI can be taught to behave according to principles drawn from such a broad and diverse set of sources is remarkable. By incorporating principles from the Universal Declaration of Human Rights, for example, chatbot responses are meant to reflect the importance of freedom, equality, and brotherhood. Such principles help keep chatbot conversations ethical and respectful. Likewise, principles inspired by Apple’s Terms of Service encourage the chatbot to respect people’s privacy.

Principles encouraging consideration of non-Western perspectives also play an important role in the Constitutional AI model. They reflect the need for AI to respect other cultures and to avoid responses that could be perceived as harmful or offensive. Similarly, principles drawn from DeepMind’s Sparrow Rules steer the chatbot away from responses intended to build a relationship with the user.

Anthropic Research Sets 1 and 2 add further principles aimed at keeping AI conversations civil and respectful, so that the AI answers questions in a thoughtful and courteous manner.
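To make the structure above concrete, a constitution assembled from several sources can be pictured as a list of principles tagged by origin, with one principle drawn at random for each critique or comparison, similar to the random sampling described in Anthropic’s published work on the method. The `Principle` class, `sample_principle`, `coverage_by_source`, and the wording of the five entries are hypothetical paraphrases for illustration; the actual constitution contains more than 60 principles.

```python
import random
from collections import Counter
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Principle:
    source: str  # document the principle is derived from
    text: str    # instruction shown to the model when it compares responses


# Illustrative paraphrases, one per source; the real constitution has 60+ entries.
CONSTITUTION: List[Principle] = [
    Principle("UN Universal Declaration of Human Rights",
              "Choose the response that most supports freedom, equality and a sense of brotherhood."),
    Principle("Apple's Terms of Service",
              "Choose the response with the least personal or confidential information about others."),
    Principle("Non-Western perspectives",
              "Choose the response least likely to be seen as harmful or offensive by a non-Western audience."),
    Principle("DeepMind's Sparrow Rules",
              "Choose the response that is least intended to build a relationship with the user."),
    Principle("Anthropic Research Sets 1 and 2",
              "Choose the response that is more thoughtful, respectful and courteous."),
]


def sample_principle(rng: random.Random) -> Principle:
    """Draw one principle uniformly at random for a single critique or comparison."""
    return rng.choice(CONSTITUTION)


def coverage_by_source(n: int, seed: int = 0) -> Counter:
    """Count how often each source is drawn over n sampled steps."""
    rng = random.Random(seed)
    return Counter(sample_principle(rng).source for _ in range(n))


print(coverage_by_source(1000))
```

Sampling one principle per step, rather than presenting all of them at once, keeps each prompt short while still exposing the model to every source over many training examples.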

All in all, Anthropic’s Constitutional AI model is an important development in AI research. By teaching AI to follow principles drawn from such a diverse range of sources, it aims to make automated conversations more ethical.


Disclaimer

In line with the Trust Project guidelines, please note that the information provided on this page is not intended to be and should not be interpreted as legal, tax, investment, financial, or any other form of advice. It is important to only invest what you can afford to lose and to seek independent financial advice if you have any doubts. For further information, we suggest referring to the terms and conditions as well as the help and support pages provided by the issuer or advertiser. MetaversePost is committed to accurate, unbiased reporting, but market conditions are subject to change without notice.

About The Author

Damir is the team leader, product manager, and editor at Metaverse Post, covering topics such as AI/ML, AGI, LLMs, Metaverse, and Web3-related fields. His articles attract a massive audience of over a million users every month. He has 10 years of experience in SEO and digital marketing. Damir has been mentioned in Mashable, Wired, Cointelegraph, The New Yorker, Inside.com, Entrepreneur, BeInCrypto, and other publications. He travels between the UAE, Turkey, Russia, and the CIS as a digital nomad. Damir earned a bachelor's degree in physics, which he believes has given him the critical thinking skills needed to be successful in the ever-changing landscape of the internet.
